Decentralizing an Organization: Beyond Data Mesh

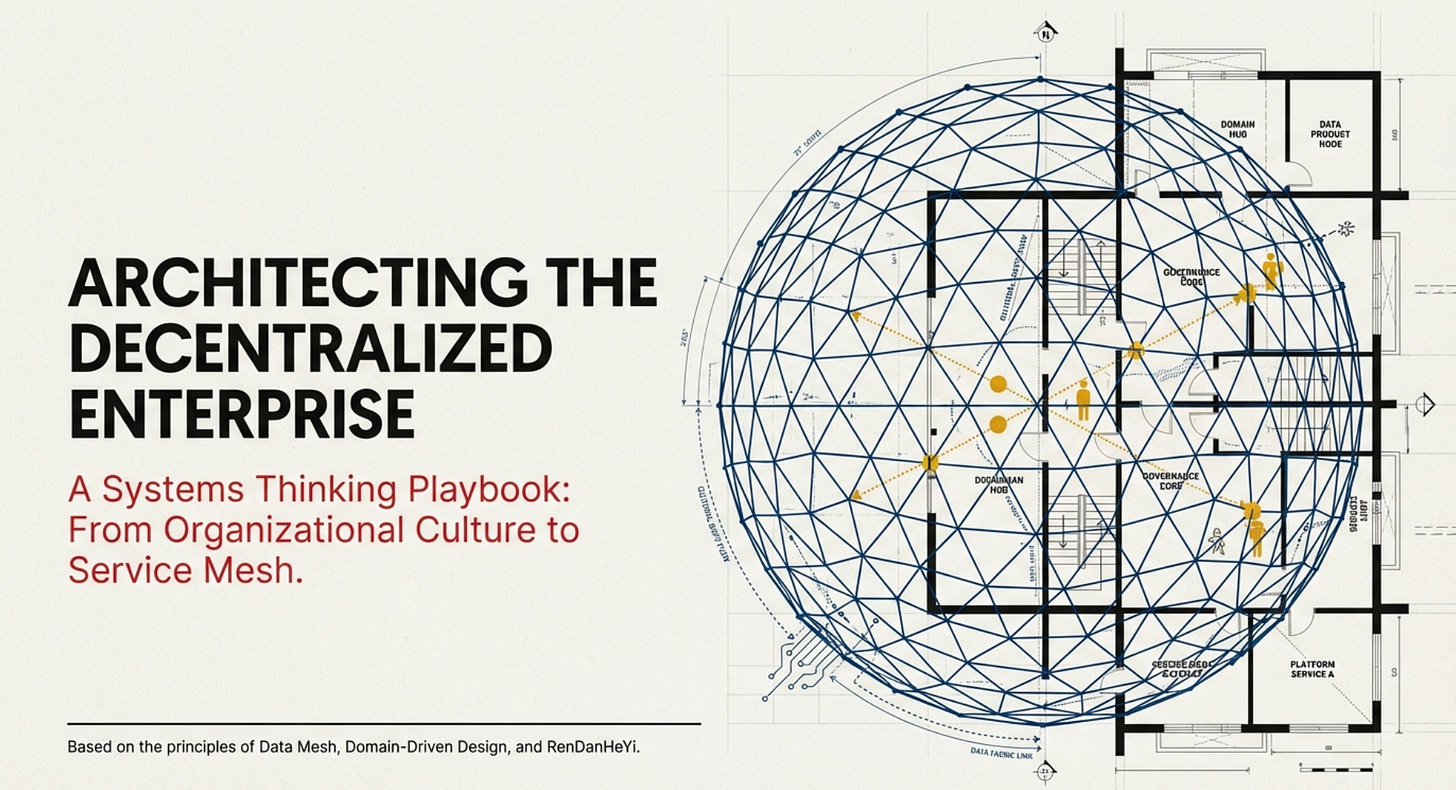

Architecting the decentralized enterprise, the symbiotic exchanges between data mesh and "service mesh," and the role of unified data platforms

About Our Contributing Expert

Vlad Radziuk | Account CTO

Vlad Radziuk is a transformation leader and systems thinker working at Nordcloud, an IBM company. He operates at the intersection of modernisation, competence centres, and AI-driven enterprise foundations with a strong focus on ontologies, knowledge graphs, and structured modelling.

With an interdisciplinary background spanning software engineering, cloud-native architecture, and enterprise data strategy, Vlad has built and led innovation units and competence centres of up to 50 professionals. As Account CTO and former Practice Lead, he combines delivery leadership with long-term architectural vision, shaping AI-powered portfolios, establishing internal knowledge ecosystems, and guiding complex transformation programs.

Beyond corporate leadership, Vlad is an active contributor to the data and knowledge graph community. He writes and researches topics such as the business case for ontologies, cross-domain metadata management, digital sovereignty, and the economics of AI integration. His thinking reflects a systemic lens: technology only creates value when aligned with governance, culture, and long-term strategy. We’re thrilled to feature his unique insights on Modern Data 101!

We actively collaborate with data experts to bring the best resources to a 15,000+ strong community of data leaders and practitioners. If you have something to share, reach out!

🫴🏻 Share your ideas and work: community@moderndata101.com

*Note: Opinions expressed in contributions are not our own and are only curated by us for broader access and discussion. All submissions are vetted for quality & relevance. We keep it information-first and do not support any promotions, paid or otherwise.

Let’s Dive In

“Data mesh doesn’t make much sense without a service mesh.”

What might sound like a bunch of buzzwords actually turned out to be a valuable lesson in systems thinking. I heard this statement during a discussion with a group of enterprise architects about how data mesh could support decentralizing their enterprise.

The challenges they described were familiar: bottlenecks, siloed data, and the all-too-common lament, “We’re sitting on mountains of data but extracting little value from it.”

If you’re a data specialist, these issues likely sound familiar - they’re part of our daily reality.

Then, the conversation took an unexpected turn.

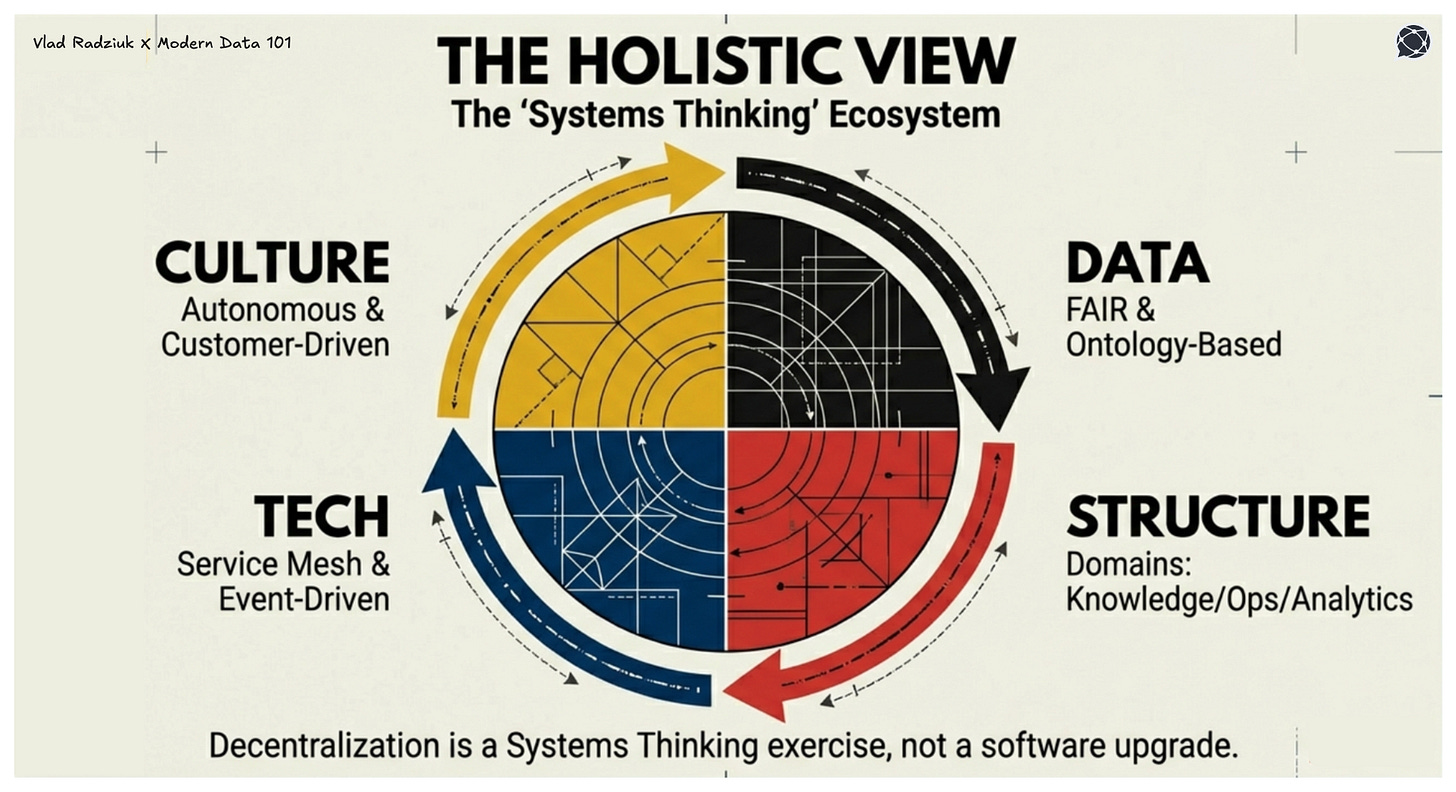

One architect emphasized the need for a holistic approach when transforming organizations toward a platform-based setup with federated governance and decentralized ownership. That’s when he dropped that line.

It was a powerful reminder for me: real-world systems are complex and interdependent. Inspired by this insight, I decided to outline a high-level playbook for developing systematic approaches to decentralized data management methodologies like data mesh.

This playbook will probably not be interesting for everyone, but if you’re looking to understand the broader context and make better decisions (or offer better advice) for your internal or external stakeholders, it’s worth stepping back and examining the bigger picture beyond just the data layer.

Reasoning behind the decentralization of organizations

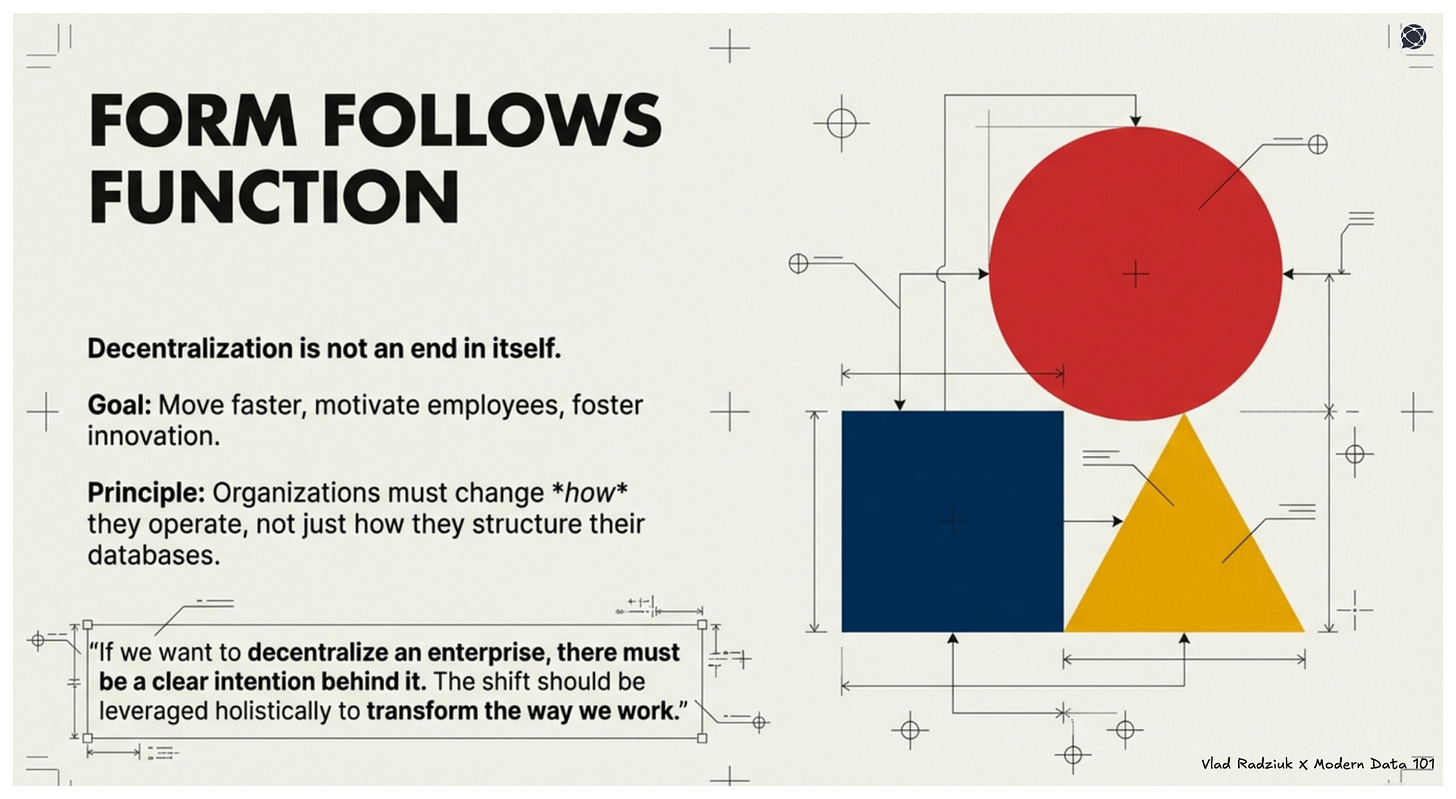

Decentralization, as the core idea of the data mesh methodology, isn’t an end in itself, but a means to drive better data quality and unlock greater value from it. But the question that occupies me is:

How can organizations maximize benefits from decentralization?

Theory suggests that decentralized organizations move faster, motivate employees more effectively, and foster innovation. My own experience confirms this. When an organization’s culture is focused on delivering customer value, having multiple centers of power can lead to remarkable outcomes.

For example, when I led a competence center for application development (operating outside the company’s traditional hierarchy), I was able to create new narratives, uncover new opportunities, and engage employees in ways that a top-down approach simply wouldn’t allow.

Another example that fascinated me recently

Local leaders in a multinational consulting firm, operating in a smaller market, developed a strategy to sustain growth even during years with a slower macroeconomy. They began serving local startups with attractive pricing, which gave them indirect access to venture capital networks and diversified their pipeline.

Many of us have seen similar success stories, but let’s be honest: often, these are driven by the enthusiasm of individuals rather than institutionalized decentralization. What interests me is how organizations can systematically enable decentralization at scale. What platforms, cultures, and organizational designs are needed to make this work?

As the Bauhaus movement taught us in design and architecture, “form follows function.”

Decentralization isn’t just about restructuring. It’s about how an organization operates. If we want to decentralize an enterprise, there must be a clear intention behind it. The shift should be leveraged holistically, not just to improve data value, but to transform the way we work.

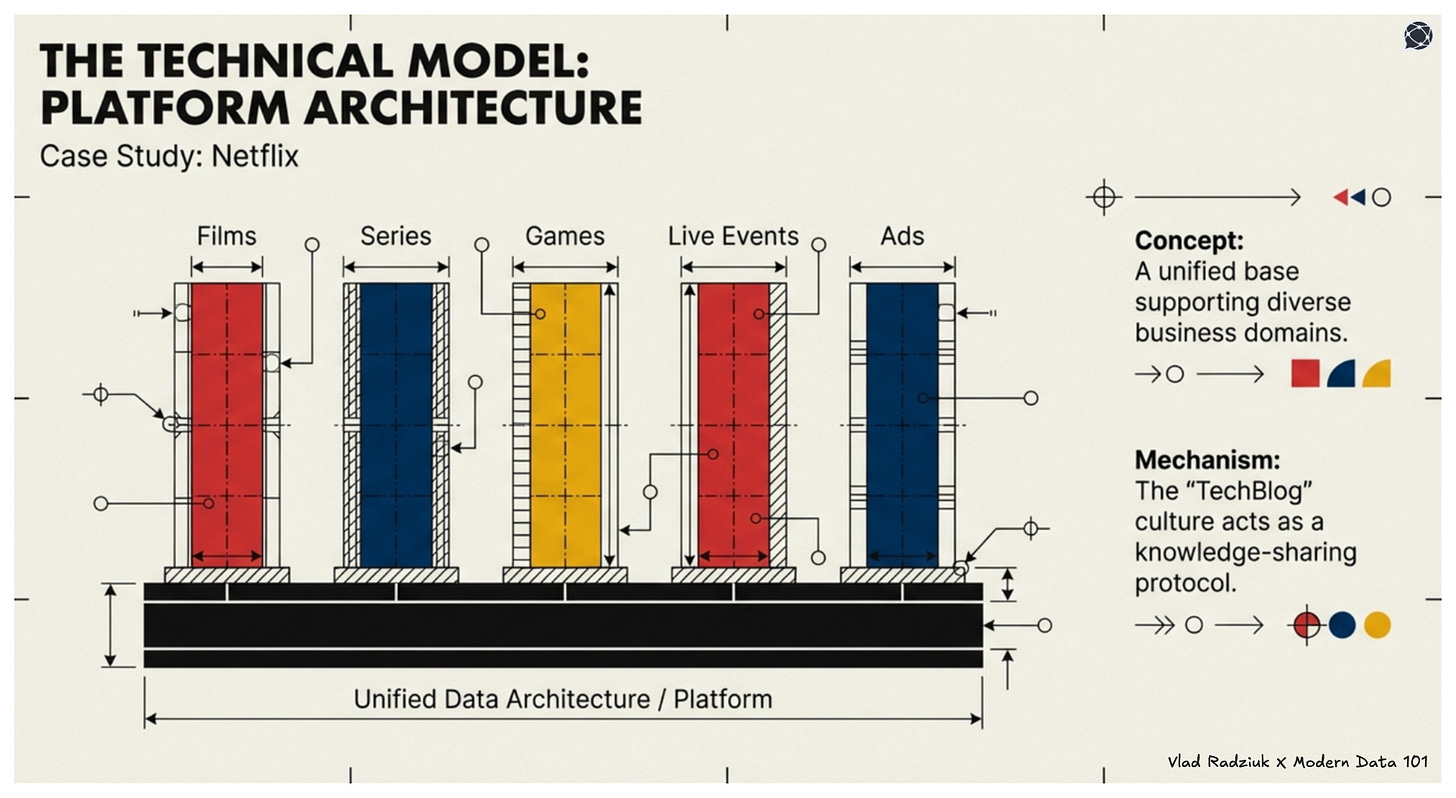

In terms of platforms, Netflix can serve as a poster child with its data mesh architecture, knowledge graph, and Unified Data Architecture, which support various domains serving different parts of its platform, including films, series, games, live events, and ads. Netflix engineers regularly publish articles about their architecture on the company’s engineering blog (Netflix TechBlog), which I highly recommend reading.

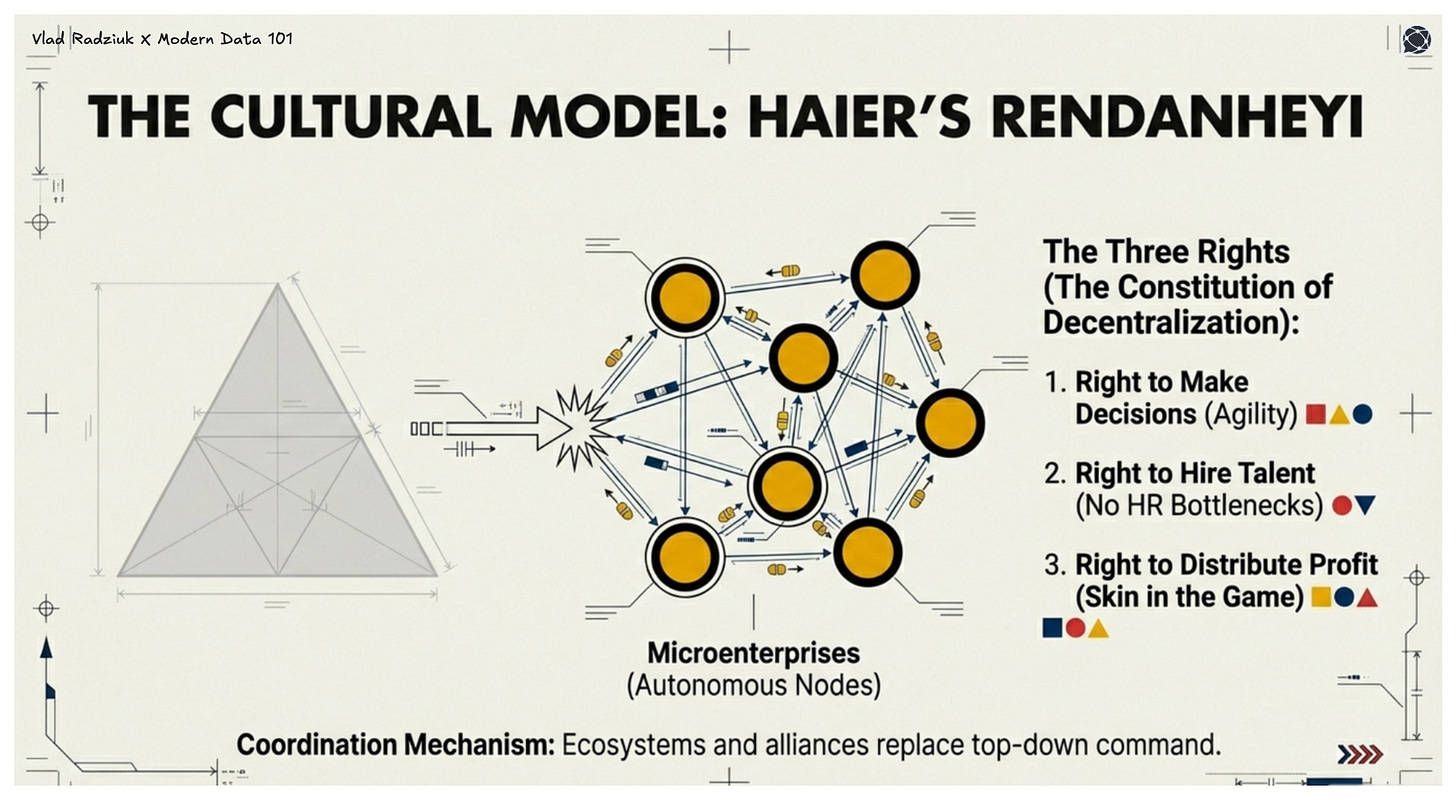

Culture-wise, there is one company that made decentralization its source of competitive advantage and became a role model: a Chinese player in the IoT area called Haier.

They developed a philosophy called RenDanHeYi, after which they gave autonomy to their business units (e.g., a unit producing refrigerators, or other kinds of home appliances), which started to serve as “microenterprises”.

Each of these internal companies got “three rights”:

the right to make independent decisions,

the right to hire talent and

the right to distribute profit

So they can quickly make decisions based on market changes, take responsibility for their own gains and losses, and share value in accordance with the agreement.

Instead of a centralized strategy, Haier’s approach is customer-driven: each team identifies real needs, builds solutions, and coordinates through ecosystems (e.g., “microenterprises” can form alliances to achieve bigger goals and to align on higher-level strategy).

Haier still has some centralized institutions that fund high-risk, long-term bets. Although I could not find much about the internal technological setup of Haier, I still brought up this example to illustrate how important it is to think systematically when planning decentralization, and not to just focus on technology in general or data in particular.

Still, this piece is primarily for data professionals, so let’s go back to what we know best and deduce other aspects from our shared vision of the bright data future.

What does it actually mean for data to be FAIR?

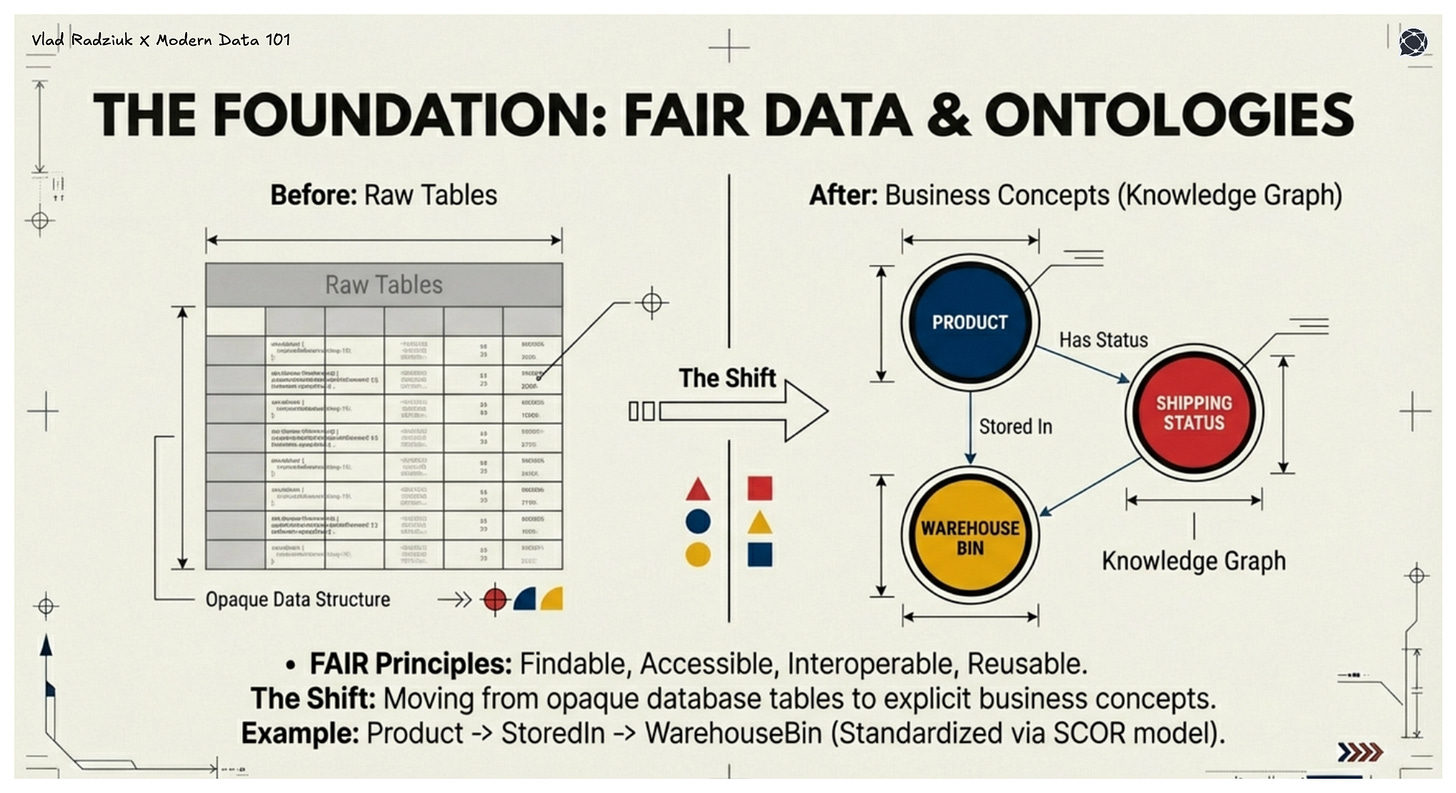

FAIR data (data that adheres to the principles of findability, accessibility, interoperability, and reusability) is a cornerstone of modern data management. For organizations aiming to implement FAIR, a data product culture (often supported by methodologies like data mesh) and explicit concept modeling (using ontologies) are essential.

This shift moves the focus from tables to business concepts: entities with clear definitions, data instances, and relationships to other concepts.

The FAIR principles provide a clear North Star for how data should be managed to deliver business value. Let’s consider logistics as an example. FAIR data would align with master data (locations, products, partners) and reference data (units of measure, currency codes).

Each data product or data point would have a persistent identifier (PID) or URI, enabling actions like scanning a pallet’s PID to instantly retrieve its contents, origin, and destination from a shared database.

Data would be fully described using knowledge languages or ontologies. For instance, a controlled vocabulary would standardize status terms like “Received,” “Picked,” or “Shipped” (aligned with the SCOR model), while relationships like “Product → StoredIn → WarehouseBin” would be explicitly defined.
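To make the logistics example a bit more tangible, here is a minimal Python sketch of the idea. It is only an illustration under my own assumptions: the `example.org` PIDs, the predicate names (`contains`, `storedIn`, `hasStatus`), and the helper functions are hypothetical, and a real implementation would more likely use RDF and a proper ontology than an in-memory set of tuples.

```python
from enum import Enum


class Status(Enum):
    """Controlled vocabulary for status terms (the three terms named in the text)."""
    RECEIVED = "Received"
    PICKED = "Picked"
    SHIPPED = "Shipped"


# Hypothetical persistent identifiers (PIDs); the URIs are made up for illustration.
PALLET = "https://example.org/pid/pallet/0001"
PRODUCT = "https://example.org/pid/product/tea-42"
BIN = "https://example.org/pid/bin/A-17"

# Explicit relationships stored as (subject, predicate, object) triples.
triples = {
    (PALLET, "contains", PRODUCT),
    (PRODUCT, "storedIn", BIN),
    (PALLET, "hasStatus", Status.RECEIVED),
}


def describe(pid: str) -> dict:
    """Return every predicate and its objects recorded for a given PID."""
    result: dict = {}
    for subject, predicate, obj in triples:
        if subject == pid:
            result.setdefault(predicate, []).append(obj)
    return result


def parse_status(raw: str) -> Status:
    """Accept only terms from the controlled vocabulary; anything else raises ValueError."""
    return Status(raw)
```

"Scanning" the pallet then amounts to calling `describe(PALLET)`, and a status term outside the vocabulary is rejected at the boundary instead of silently entering the data.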

The result? Both humans and AI-powered solutions can act directly on datasets, without ambiguity or the need for further interpretation.

I believe FAIR principles offer a powerful lens for examining the holistic decentralization of organizations.


Let’s imagine the shiny world of FAIR data: how would it look from the user's perspective? Users can find the data they need by typing, for example, concept names, synonyms, or related concepts into the search bar of a data catalog.

What’s even more compelling is that data is aggregated by business concepts, allowing users to navigate from concept to concept, much like on Wikipedia, where you might start with “black tea” and end up reading about Einstein’s biography.

In this case, however, users could search for a product, explore its sold instances, track shipping status, and receive recommendations for similar products. This seamless experience is made possible by combining complete domain ownership of data with federated governance.

But what does this mean when we step outside the data perspective?

Users traverse the enterprise network across domains. They don’t just access the knowledge space of different domains (e.g., by navigating a knowledge graph through a graphical interface, clicking on PIDs to move between concepts), but can also consume data or access software functionality, provided they have the necessary permissions.

Domains own their assets, meaning they control business logic, access policies, and boundaries. In essence, they decide who can “visit” and interact with their data, much like setting the rules for guests in their own home.

Because domains fully own their business logic, the definitions of business concepts and processes can be traced: from knowledge bases and controlled vocabularies, through data, all the way to software.

Since domains provide their assets as products, they are responsible for the full lifecycle: maintenance, updates, monitoring, and beyond.

As you can see, decentralization, driven by data mesh and ontology-based data management, has far-reaching implications for how IT operates. The FAIR criteria don’t just guide data management. They also highlight what’s needed for domains to thrive in a decentralized environment.

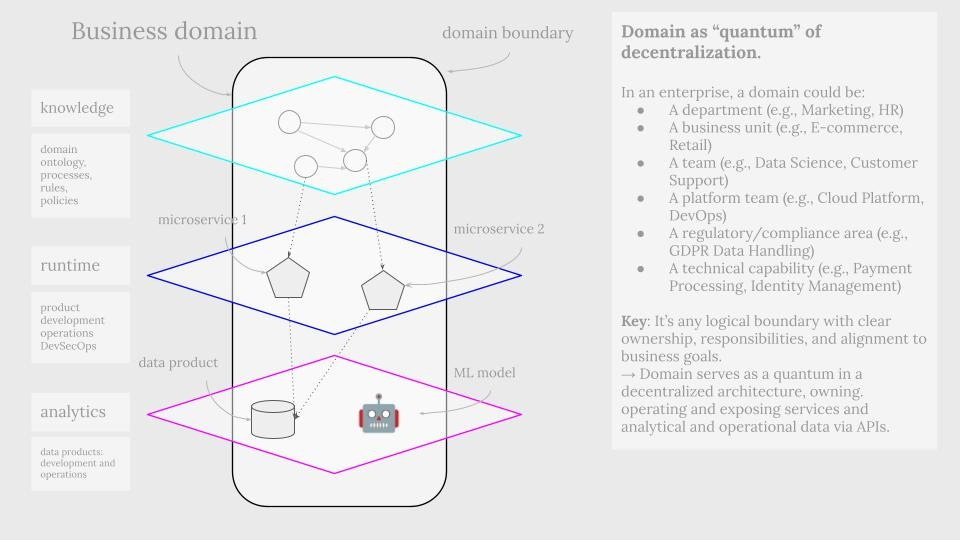

Domain as a quantum of decentralized organizations

Decentralization often means empowering domains, but what exactly is a domain? It could be a department, business unit, team, platform team, technical capability, or something else entirely. At its core, a domain is any logical boundary with clear ownership, responsibilities, and alignment to business goals.

In the context of data, a domain refers to an organizational unit that not only produces data but also owns it throughout its entire lifecycle, from creation to consumption. However, ownership doesn’t begin with the data itself. It starts earlier, with business logic and language, a principle well-captured by the domain-driven design (DDD) methodology.

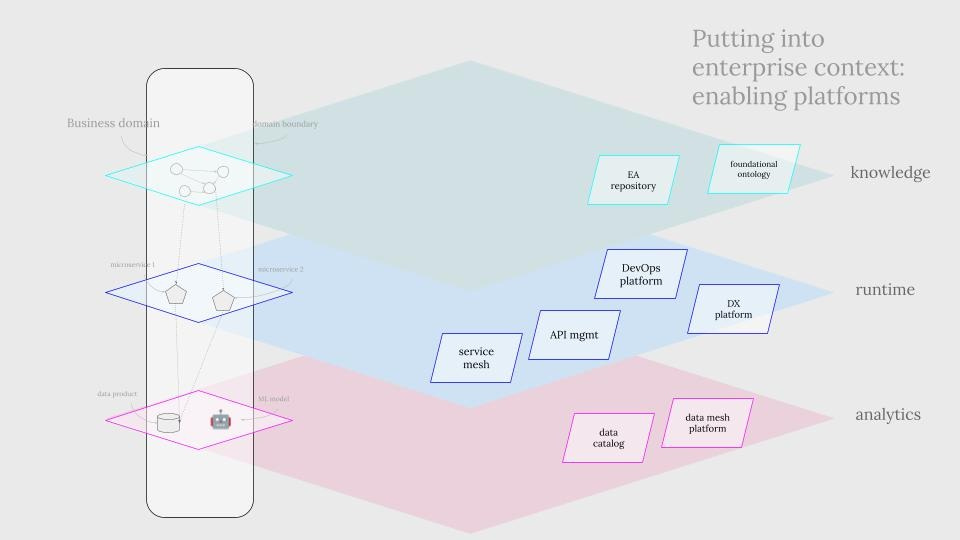

Domains have boundaries, which define their unique knowledge models, language, and rules (something we often call business logic). These boundaries extend beyond abstract concepts and include tangible assets that support domain operations, such as software and data. As a result, domains exist across multiple layers:

Knowledge layer: The conceptual world of definitions, rules, and language.

Operational layer: The runtime environment of applications, services, and microservices.

Analytical layer: The world of data products and insights.

To successfully decentralize an organization, all these layers must be addressed, with each playing a critical role in enabling autonomy, clarity, and alignment.

If we abstract away from data or software and focus instead on business capabilities, something interesting emerges: those capabilities remain consistent, whether viewed through an operational or analytical lens.

The language, definitions, and rules (in other words, the knowledge model) persist across all layers. This alignment means that software and data can be managed in surprisingly similar ways. And, fortunately, we already have the tools to do it.

API-first approach and communication over the web

First of all, in an ideal FAIR world, the way we write software is different from how enterprises have done it for decades, as we push business logic towards knowledge models (ontologies) and data, away from software. As Martynas Jusevičius said recently:

“Ora Lassila has claimed that all non-RDF data is legacy data. I would go one step further and claim that all software not built natively on and for RDF is legacy software.” (source).

I find this point interesting, but I personally have yet to check what it actually means and to what contexts it applies. By contrast, domain-driven design (DDD) is widely adopted nowadays and delivers value in many ways, one of them being that code is modeled after business concepts, not technical vocabulary.

Another interesting relationship between software and data is that FAIR ultimately requires data products to follow principles similar to those that software engineers have widely adopted: modular development (microservices) and communication over HTTP APIs.

With data products being built like web services, you could apply the same observability pillars and access control mechanisms to them.

And then it also becomes clear why the architect mentioned at the beginning of the article said, “Data mesh without service mesh does not make sense”. Yes, this phrase was taken out of context. The guy worked at a digital-native company before joining a traditional enterprise, so for him, it is easy to judge.

Not all data products are built like web services, for a number of reasons, and a service mesh is not strictly necessary to start implementing a data mesh. But still, the connection between the two concepts does exist and should not be ignored.

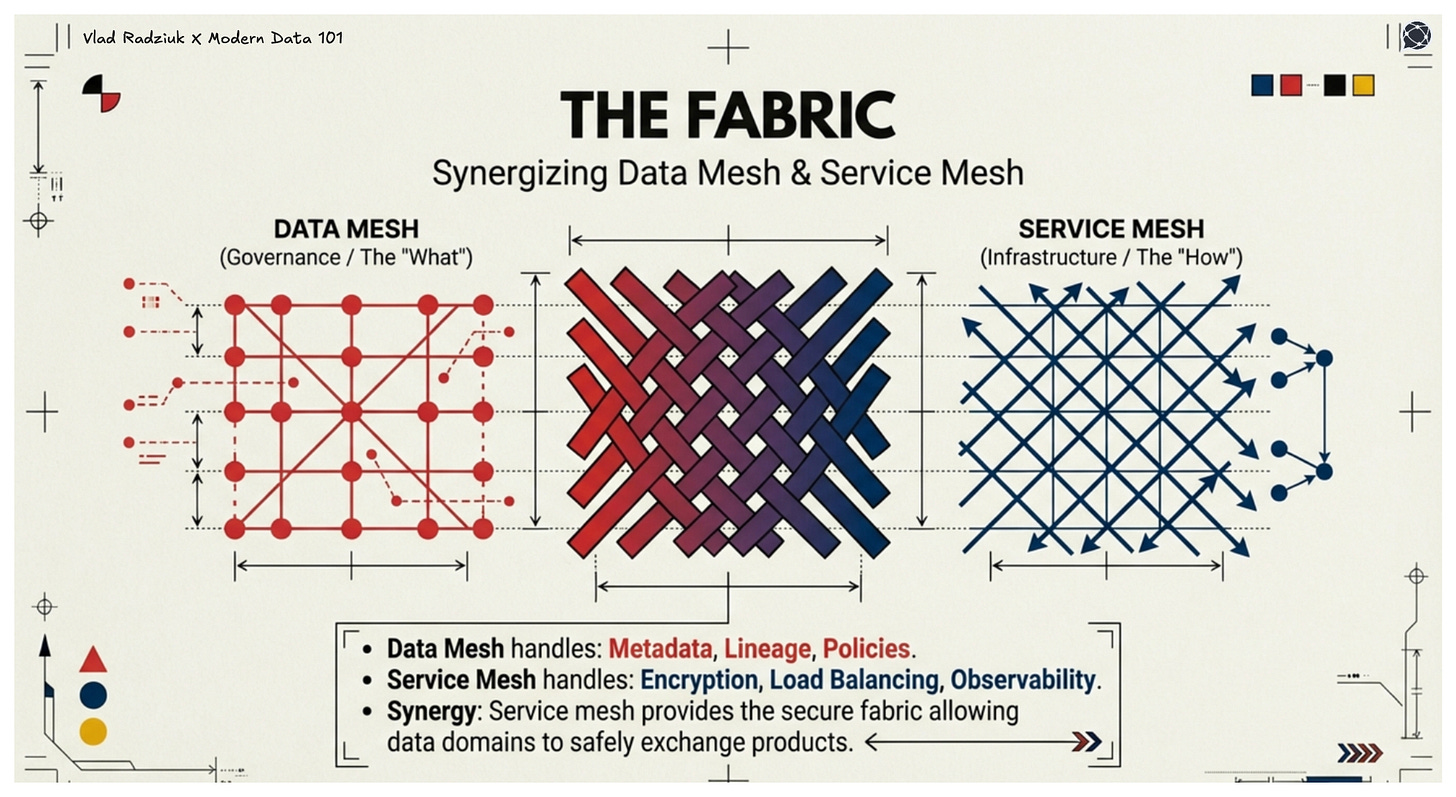

Data mesh tools focus on data-specific needs (metadata, lineage, access policies), while service mesh focuses on network-level concerns (encryption, load balancing, observability). One lives in the runtime world of microservices, the other in the organizational world of data domains.

Data mesh could rely on service mesh to provide the underlying networking and security fabric that connects domain-owned data products. Without service mesh, data mesh domains could struggle to enforce access controls, manage traffic, and ensure secure communication. In turn, service mesh ensures that the APIs feeding AI are reliable, secure, and observable.

Service mesh is not mandatory for all data products, but it would be quite helpful for those exposed as services.

With data being governed using the data mesh principles, with specialized tools and platforms supporting this, and with service mesh enabling services to talk to each other in a secure, observable way, it is easier to draw the technical boundaries between domains.

With that said, it is also important to mention that technology alone is not sufficient, as teams have to adopt the product mindset when it comes to both data and software. Handling them as a product means not only developing them, but also managing them along the whole lifecycle, including operations, monitoring, and so on.

Communication between the domains

It appears natural that different domains would like to communicate with each other: stages in a supply chain depend on each other, production facilities sometimes need to know the results of R&D activities, and a recommendation engine domain needs to pull historical data on a customer’s past transactions.

While different parts of an organization need to communicate, on the architecture level we would like to prevent tight coupling between domains: scenarios in which components are highly dependent on each other, making them less flexible and harder to maintain.

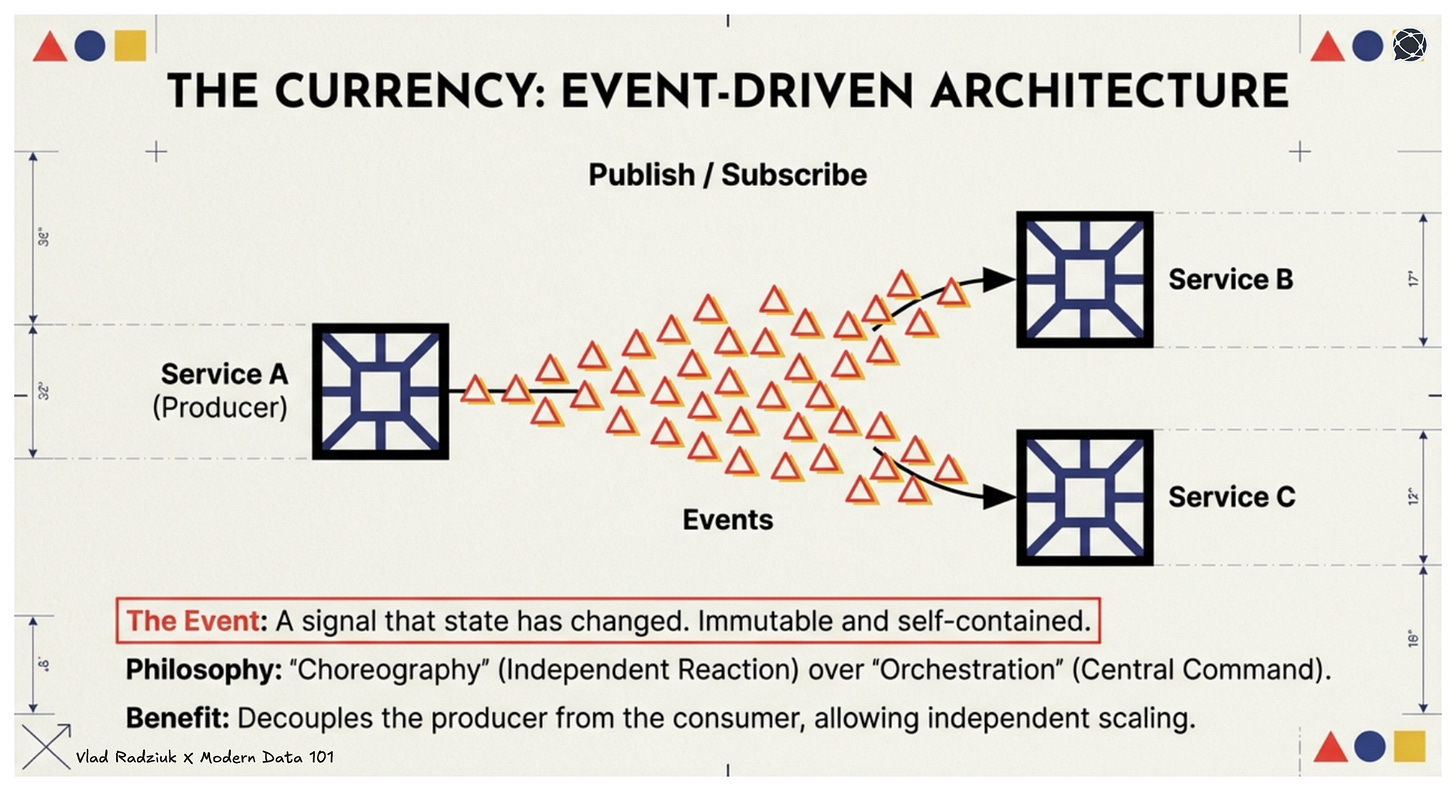

The problem of coupling has many aspects: technological (e.g., programming languages), data format (binary, Parquet, CSV), interaction style (async vs sync, REST vs. GraphQL), and many others. Event-driven architectures seem to be a good way to minimize the coupling.

What is actually an event?

As the quantum of event-driven architectures, an event is a signal that the state of the system has changed. One necessary property of an event is that it is immutable. As a consequence of these two properties, in a decentralized architecture, an event can serve as a contract for integrating different systems.

Producing and consuming domains can then agree upfront on a schema for the events. What attributes would a good schema contain? At the very least, an event needs to contain a notification about the change.

But the more self-contained an event is, the more successful an integration can be. Thus, we would like to see not only the fact that something changed, but also the nature of the changes (what data was modified) and the context.

In the best case, a domain event should be stateless, immutable, modeled as close to the domain as possible, belong to one of the types from the controlled vocabulary/taxonomy, be scoped to a bounded context, and describe the intent behind the change.

Such an event can ensure interoperability and thus serve as a good “currency” in a decentralized architecture. Furthermore, an event with an ID can help with deduplication.
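The properties listed above can be sketched in a few lines of Python. Treat this as one possible shape, not a standard: the field names, the `EventType` values, and the `DeduplicatingConsumer` class are all assumptions of mine for illustration.

```python
import uuid
from dataclasses import dataclass, field
from datetime import datetime, timezone
from enum import Enum
from types import MappingProxyType
from typing import Any, Mapping


class EventType(Enum):
    """Hypothetical controlled vocabulary of event types."""
    ORDER_SHIPPED = "logistics.order.shipped"
    ORDER_RECEIVED = "logistics.order.received"


@dataclass(frozen=True)  # frozen: attributes cannot be reassigned after creation
class DomainEvent:
    event_type: EventType        # must come from the controlled vocabulary
    bounded_context: str         # the domain that owns the event
    payload: Mapping[str, Any]   # what changed
    intent: str                  # why it changed
    event_id: str = field(default_factory=lambda: str(uuid.uuid4()))
    occurred_at: datetime = field(
        default_factory=lambda: datetime.now(timezone.utc)
    )

    def __post_init__(self) -> None:
        # Wrap a copy of the payload in a read-only view so it cannot be mutated either.
        object.__setattr__(self, "payload", MappingProxyType(dict(self.payload)))


class DeduplicatingConsumer:
    """Processes each event at most once, keyed by event_id."""

    def __init__(self) -> None:
        self._seen: set = set()
        self.handled: list = []

    def handle(self, event: DomainEvent) -> bool:
        if event.event_id in self._seen:
            return False  # duplicate delivery, ignore
        self._seen.add(event.event_id)
        self.handled.append(event)
        return True
```

A consumer that receives the same event twice (which happens with at-least-once delivery) handles it only the first time, and neither the producer nor the consumer can alter the event after the fact.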

Producing domains are responsible not only for adding context to their events, but also for versioning them and ensuring their backward compatibility (for some time), while consuming domains must take ownership of consumer-related logic.
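One common way to split these responsibilities is additive versioning on the producer side and a "tolerant reader" on the consumer side. The sketch below assumes a hypothetical shipment event with a `schema_version` field in `major.minor` form; the field names and the default value are illustrative, not taken from any real schema.

```python
from typing import Any

# Hypothetical: the major schema version this consumer was built against.
SUPPORTED_MAJOR = 1


def read_shipment_event(raw: dict[str, Any]) -> dict[str, Any]:
    """Tolerant reader: ignore unknown fields, default missing optional ones."""
    major = int(str(raw["schema_version"]).split(".")[0])
    if major != SUPPORTED_MAJOR:
        # A new major version signals a breaking change made by the producer.
        raise ValueError(f"unsupported schema version: {raw['schema_version']}")
    return {
        "order_id": raw["order_id"],               # required since version 1.0
        "carrier": raw.get("carrier", "unknown"),  # optional, assumed added in 1.1
    }
```

As long as the producer only adds optional fields within a major version, old and new events pass through the same consumer code, and a breaking change fails loudly instead of being misread.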

It is considered a good practice when producers know their consumers and do not give out secrets of their life on a wall at the central square - e.g., they can have a policy that only users with specified roles or keys can read the information in the event payload.

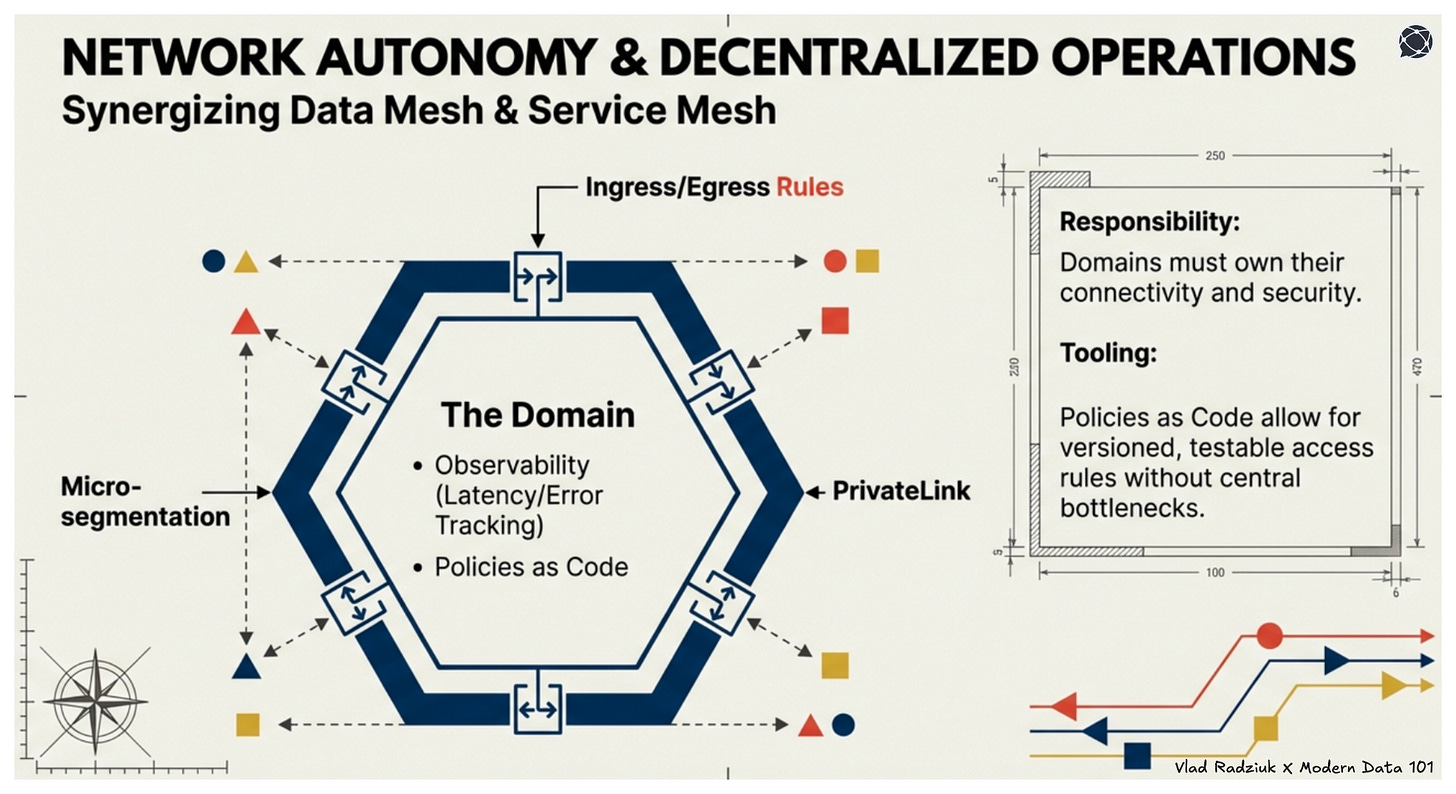

As communication between domains happens over networks, I think it makes sense to touch on some networking aspects, too. If domains are responsible for managing their own software, data assets, and their domain events, it follows naturally that they should also take on more responsibility for managing their network and communication streams.

Different domains may have unique requirements for security, latency, or compliance, making centralized control impractical.

While it’s unrealistic for domains to manage the entire networking infrastructure, they can realistically own their access policies and monitor their networks using modern observability tools.

Service mesh empowers domains to configure their own ingress/egress rules, retries, timeouts, and load balancing. With observability tools, domains can independently track latency, errors, and traffic - without relying on a central team.

Managing policies (e.g., access or firewall rules) as code enables versioning, testing, and deployment via CI/CD pipelines, ideally in a tool-agnostic way.
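As a rough illustration of what "policy as code" can mean in practice, here is a tool-agnostic Python sketch: the policy is plain, versioned data that lives in a repository, and a test that a CI/CD pipeline could run guards it before deployment. The `AccessPolicy` class, the resource name, and the roles are hypothetical.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class AccessPolicy:
    """A versioned access policy expressed as plain data."""
    version: str
    resource: str
    allowed_roles: frozenset

    def permits(self, roles: Iterable[str]) -> bool:
        """Grant access if the caller holds at least one allowed role."""
        return not self.allowed_roles.isdisjoint(roles)


# Hypothetical policy owned by a logistics domain.
SHIPMENT_EVENTS_POLICY = AccessPolicy(
    version="1.2.0",
    resource="logistics.shipment-events",
    allowed_roles=frozenset({"logistics-analyst", "recommendation-service"}),
)


def test_shipment_events_policy() -> None:
    """A check a pipeline could run before the policy is rolled out."""
    assert SHIPMENT_EVENTS_POLICY.permits(["recommendation-service"])
    assert not SHIPMENT_EVENTS_POLICY.permits(["marketing-intern"])
    assert not SHIPMENT_EVENTS_POLICY.permits([])
```

Because the policy is ordinary data with a version, a change to `allowed_roles` shows up as a reviewable diff, and the same definition could be translated into whatever format the underlying mesh or firewall tooling expects.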

Boundaries between domains are enforced at two levels.

Thanks to network isolation (isolated virtual networks and micro-segmentation) on one side and event-driven architecture on the other, we can decouple workloads and services, enabling independent scaling.

Instead of service A polling service B, service B emits events when changes occur, embodying a “choreography” mindset (vs. “orchestration”) and enabling service-level decentralization. Back on the network layer, tools like PrivateLink and enforced encryption for all inter-domain communication ensure secure connectivity between domains.
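The choreography idea can be shown with a deliberately tiny in-process sketch. In a real setup the bus would be a message broker and the two sides would be separate services; the `EventBus` class, the topic name, and the handler below are hypothetical stand-ins.

```python
from collections import defaultdict
from typing import Any, Callable


class EventBus:
    """Minimal in-process publish/subscribe bus standing in for a broker."""

    def __init__(self) -> None:
        self._subscribers: dict = defaultdict(list)

    def subscribe(self, topic: str, handler: Callable[[dict], Any]) -> None:
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, event: dict) -> None:
        # The producer does not know who is listening, or whether anyone is.
        for handler in self._subscribers.get(topic, []):
            handler(event)


bus = EventBus()

# Consumer side (hypothetical recommendation domain): reacts to events
# instead of polling the order service for changes.
purchase_history: dict = {}


def on_order_placed(event: dict) -> None:
    purchase_history.setdefault(event["customer_id"], []).append(event["product_id"])


bus.subscribe("orders.placed", on_order_placed)


# Producer side (hypothetical order domain): emits an event when state changes.
def place_order(customer_id: str, product_id: str) -> None:
    bus.publish("orders.placed", {"customer_id": customer_id, "product_id": product_id})
```

The order domain never calls the recommendation domain directly, so either side can be scaled, replaced, or extended with further subscribers without touching the other.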

The difficult part: human behaviour and business architecture

When decentralizing organizations, you need a systematic approach that will touch different aspects of domain operations. In this text, I touched on the main principles, such as:

decentralized ownership over software, data, and network assets;

self-service platform operated by centralized platform teams;

cross-functional collaboration happening on multiple levels, especially in governance;

computational governance, policies as code, infrastructure as code, versioned resources and workflows;

API-first design;

event-driven architecture.

But as compelling as your approach may sound on paper, reality is far more challenging. You’ll face legacy cultures rooted in the industrial era, organizational inertia, and deep-seated biases.

You’ll need to strike the right balance between centralization and decentralization - one that aligns with your organization’s unique context. And you’ll have to prepare people for new roles, like data stewards, which require both technical and cultural shifts.

To be honest, this is more art than science.

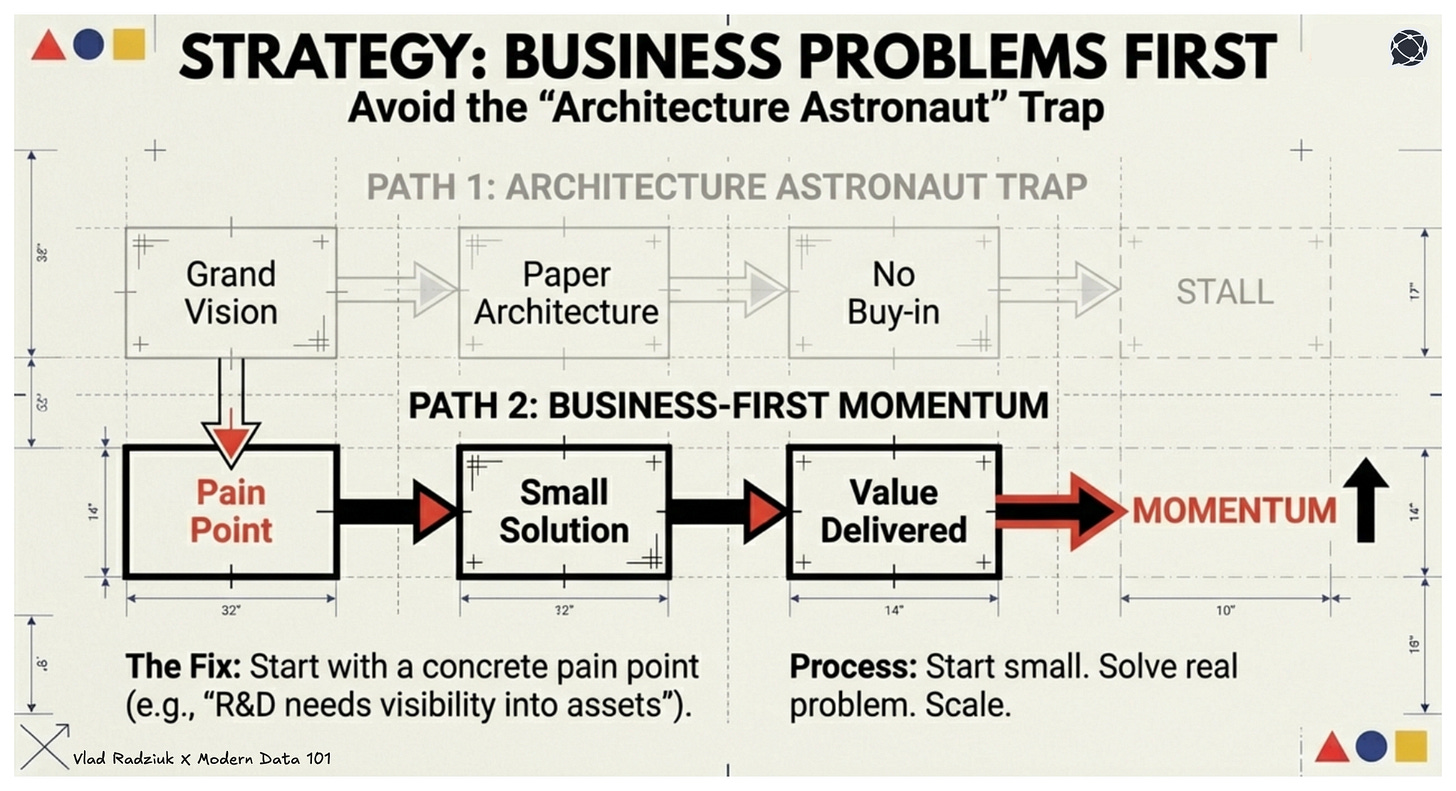

Start with a business problem.

I’ve seen many teams eager to improve how their IT organizations operate, confident that they have management buy-in. But too often, their plans were disconnected from real business problems.

Instead of addressing tangible pain points, they focused on crafting the perfect architecture (on paper). That’s not how transformative changes like decentralization work.

You can’t roll out a grand vision all at once.

You have to start with a specific, agreed-upon business problem - something concrete that management cares about. For example, instead of deploying an entire data mesh infrastructure upfront, you might begin with a data catalog to help the R&D team gain visibility into the company’s data assets.

This approach achieves two critical things: real buy-in (when your architecture solves a specific, urgent problem, stakeholders see its value immediately) and purpose (real problems provide a clear sense of direction: something abstract architecture diagrams often lack). Start small, solve a real problem, and build momentum from there. That’s how meaningful change happens.

Search for early adopters, build alliances.

In any organization, even the quietest ones, there are always people who are eager to learn and try out new things.

Search for them: only when you work with engaged people is there a chance that your initiative will succeed.

I covered the topic of engaging employees in another article of mine on LinkedIn: “Every employee is a superhero - when they understand the economics of their jobs”. Also, don’t be afraid of building alliances across the company to ensure the success of your initiative. Again, the goal is to find people who, for whatever reason, are interested in your version of the future.

Decentralization is primarily a business endeavour, not a technical one.

I described the case of Haier, which reshaped its whole operational model in a decentralized spirit.

Decentralization is ultimately about building capabilities and shifting the authority closer to where the problems are solved.

Authority allows domains to make decisions closer to the outside world, to customers. But they also have to have the right capabilities to support their judgment. One cannot work without the other. And what is most important is that only a culture built on trust, on a positive perception of humans, can allow for true decentralization, resulting in better decisions.

When I was writing this piece, Bjørn Broum published a text where he distinguishes between delivery-centric and value-centric organizations. While the former aim at predictable outcomes and controlled workflows, the latter, which remind me of classical Japanese management practices, aim to grow value over time by continuously improving themselves.

But this learning can only happen close to where the problems emerge, which, in turn, requires having true ownership over one’s own workflows and assets. Thus, by focusing on the technical side, we risk again hoping for a magic tool (or self-service platform) to solve our problems. But, as is usually the case, we need to do some homework on the organizational level first.

MD101 Connect ☎️

If you have any queries about the piece, feel free to connect with the author(s). Or connect with the MD101 team directly at community@moderndata101.com 🧡

Author Connect 💬

Connect with Vlad on LinkedIn 💬


Hi Vlad,

Thank you for mentioning my work. I appreciate that.

The thread you trace from domain ownership through to culture and trust is what really makes the piece stand out. The service mesh vs. data mesh distinction captures exactly the kind of systems thinking that gets lost when discussions stay too focused on the data layer.

The connection to Japanese management practices is well placed too. The delivery-centric vs. value-centric framing maps naturally onto the tension between optimizing for control and optimizing for learning, and you make a strong case that genuine decentralization requires more than tooling.

Looking forward to following your work.

Bjørn