Why Leaders Can't Delegate Judgment to Systems: Where Accountability Exists

Critical necessity of maintaining human accountability within increasingly automated environments

About Our Contributing Expert

Arun Gamidi | Enterprise Data & Analytics Leader

Arun Gamidi believes most data initiatives fail not because of bad technology, but because they never connect to the decisions that actually matter. A data and analytics leader with deep experience in financial services, Arun has spent his career building and scaling data ecosystems in high-stakes enterprise environments where the cost of unclear decisions is real.

His work is grounded in a decision-first philosophy: data and AI create value only when they clarify the choices leaders must make. His framework, DATA by Design (Direction, Alignment, Trust, and Activation), gives organisations a repeatable path to align data initiatives with business goals, build trust in their information, and ensure insights reach the moment of decision.

Arun currently leads Enterprise Data & Analytics at MGIC. Through that work and his weekly Leadergence newsletter, his focus is on equipping data leaders to operate with confidence, not just access, in an AI-accelerated world. Readers can follow his ongoing thinking at Leadergence on LinkedIn. We're thrilled to feature his insights on Modern Data 101!

We actively collaborate with data experts to bring the best resources to a 20,000+ strong community of data leaders and practitioners. If you have something to share, reach out!

🫴🏻 Share your ideas and work: community@moderndata101.com

*Note: Opinions expressed in contributions are not our own and are only curated by us for broader access and discussion. All submissions are vetted for quality & relevance. We keep it information-first and do not support any promotions, paid or otherwise.

Let’s Dive In

Every decision has a consequence. And every consequence has an owner. But systems do not experience consequences, and they cannot own them.

They do not carry the weight of a wrong call or the satisfaction of a right one. That capacity belongs entirely to people. This is not a limitation of current technology, but a structural reality that more advanced systems will not change.

When something goes wrong, no one asks the model what it would do differently. They ask the leader responsible for the project or the model owner running the algorithm. That question has always pointed in the same direction, and it always will.

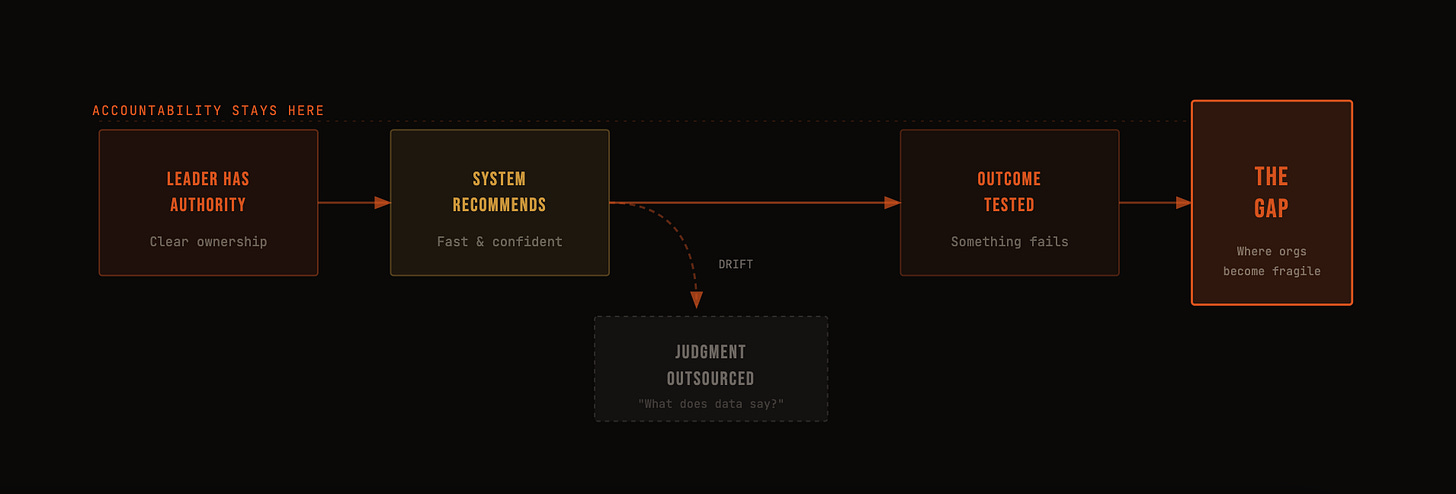

The Problem: Accountability Drift

No leader decides one morning to stop being accountable. It happens gradually in the space between a recommendation arriving and a decision being made.

Systems are fast, and they are confident. They surface answers before the question has fully formed. That speed creates a pull, and most leaders do not notice they are following it.

The question shifts. It moves from “What am I responsible for deciding?” to “What does the data say?” That may look like diligence, but in practice, it is the first step in distancing ourselves from the solution and from the end users it serves.

The problem is not bad intent or negligence, but drift. And drift is harder to catch than negligence because it never announces itself. It accumulates over time, and its impact compounds.

Accountability does not follow that drift. It does not reassign itself to the system that produced the insight or the model that ranked the options. It stays exactly where it was: with the person who had the authority to decide. That gap, between where judgment drifted and where accountability remained, is where organisations become fragile.

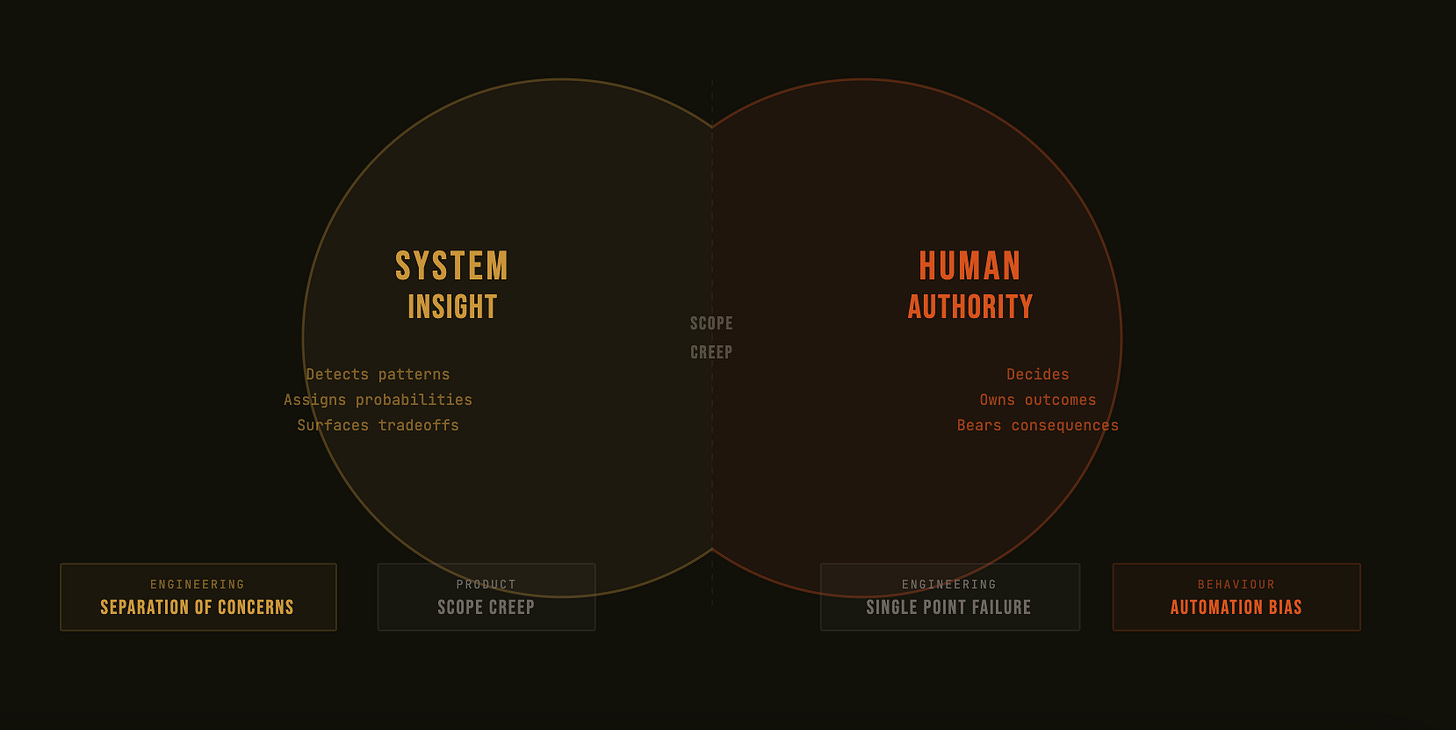

The Core Tension Between Machine Autonomy and Authority/Ownership

Separation of Concerns

Software has a concept called separation of concerns. The idea is simple: each component should do one thing, and do it well. When components try to do too much, or when their responsibilities bleed into each other, the system becomes fragile. The same principle applies to how organisations use systems to make decisions.

Systems are built for a specific job. They:

Detect patterns in data that humans cannot process at scale.

Assign probabilities to outcomes.

Surface trade-offs that would otherwise stay hidden.

These are all precise and valuable functions. None of them is the same function as deciding, and none was ever designed to be. That is a feature of systems, not a flaw. In product design, a well-scoped tool that does its job cleanly is more valuable than an overloaded one that does many things poorly.

The problem is not what systems are built to do, but what organisations ask them to become.

Scope Creep

There is a concept in product thinking called scope creep. It begins when a tool that solves one problem is gradually asked to solve adjacent ones it was not designed for.

Each extension feels reasonable in isolation. Collectively, they produce something no one fully understands, and no one fully owns.

Insight and authority are not the same thing. Insight is an input which informs, surfaces, and recommends. Authority is a commitment which decides, owns, and answers for outcomes.

When organisations stop maintaining that distinction, the system is asked to carry weight it was never architected to hold.

Single Point of Failure

Engineering has a familiar concept called the single point of failure. It occurs when too much depends on one component that was not built to bear that load. Over-reliance on system recommendations creates a different but related failure mode.

Not a single point of failure, but a single point of diffusion. Responsibility spreads so evenly across dashboards, models, and outputs that it effectively disappears. Everyone referenced the system, but no one owned the call.

This is where the confusion between insight and authority does its real damage. It does not show up immediately in outcomes. It shows up first in behaviour.

Decisions slow down or move forward without anyone truly claiming them. The system becomes as much a shield for bad actors and bad decisions as it is a tool.

And when outcomes eventually disappoint, the post-mortem reveals the same gap every time. The data was there, and the recommendation was clear, but the ownership was not.

Accountability had blurred long before anything failed, because no one had maintained the boundary between what the system was built to do and what only a person can do.

Systems are not accountable. They are not designed to be. Ownership is a human function, and no increase in model capability or self-governance changes that. The tension is not between humans and technology. It is between what a system can do autonomously and what a person must still own.

The Three Requirements for Leaders

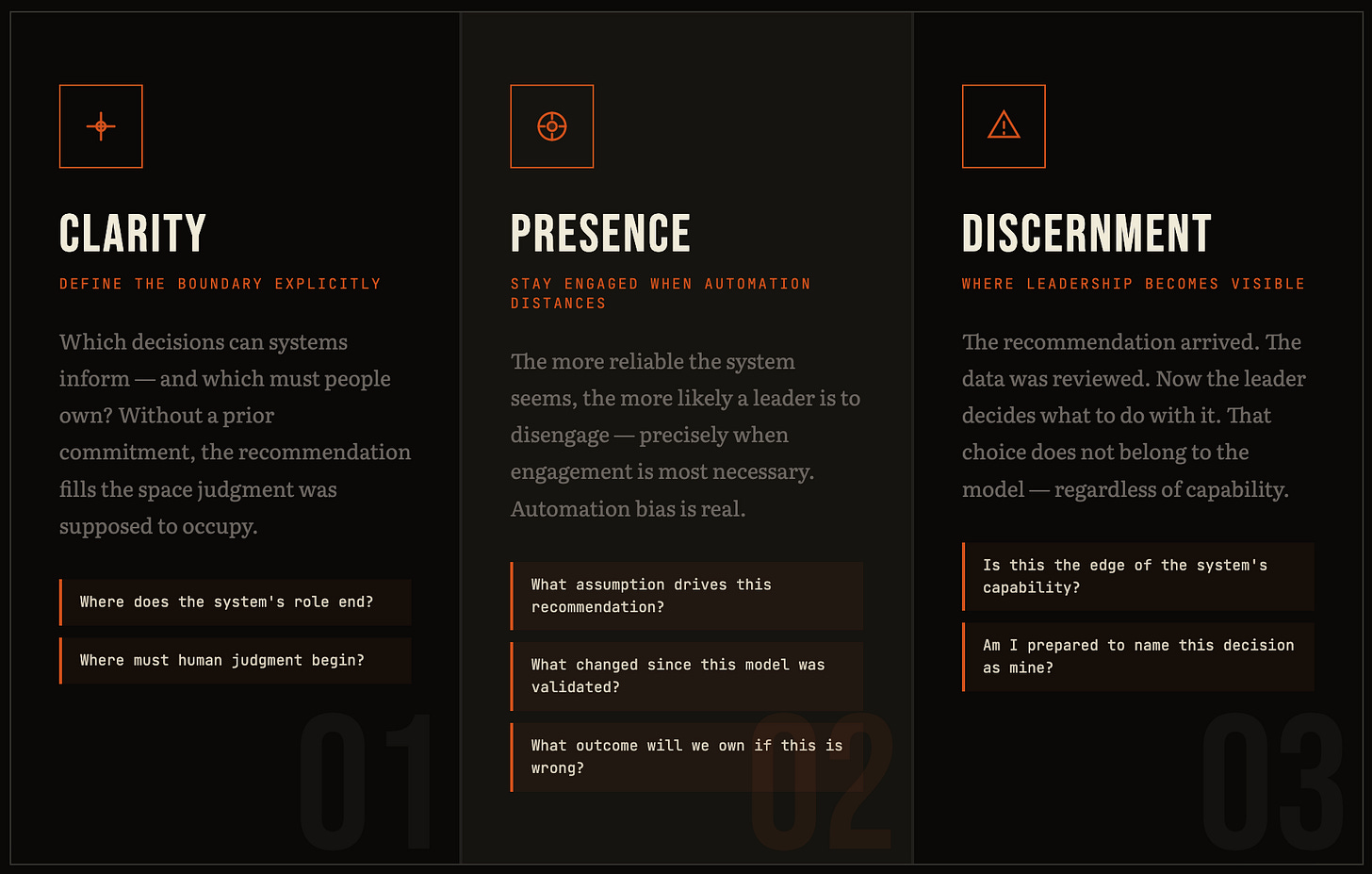

Systems do not close the gap between insight and accountability. Leaders do, and they do it through three specific capacities, each one distinct, each one dependent on the others.

Clarity: Define the Boundary Explicitly

In software systems, when two components interact without a clear contract, each assumes the other is handling something neither actually is. The result is a subtle gap through which responsibility falls. Leadership inside data-driven organisations has the same problem.

Clarity begins with a question leaders rarely ask explicitly. Which decisions can systems inform, and which decisions must people own? Those are not the same category, and treating them as interchangeable is where accountability begins to break down.

The boundary matters because data does not make it visible on its own. A recommendation arrives with confidence and a probability attached. The output looks complete.

Without a prior commitment about where the system’s role ends and the leader’s role begins, the recommendation fills the space that judgment was supposed to occupy.

More data does not resolve this. In fact, it compounds it. When information is abundant and confidence intervals are tight, it becomes easier to treat insight as a decision. And that impression holds until outcomes are tested.

Then the gap becomes visible, and so does the absence of ownership. The boundary has to be defined before the recommendation arrives, not after it is challenged.
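To make the contract analogy concrete, here is a minimal sketch of what writing the boundary down in advance could look like. Everything in it is an illustrative assumption rather than part of any real framework: the `DecisionBoundary` record, the `accept_recommendation` function, and the example decision and owner are all hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DecisionBoundary:
    """Hypothetical 'contract' agreed before any recommendation arrives."""
    decision: str     # the decision being made
    system_role: str  # what the system may do: inform, rank, estimate
    owner: str        # the person who decides and answers for the outcome


class UnownedDecisionError(Exception):
    """Raised when a recommendation arrives for a decision nobody has claimed."""


def accept_recommendation(registry, decision, recommendation):
    """Pair a system recommendation with its named human owner, or refuse it."""
    boundary = registry.get(decision)
    if boundary is None or not boundary.owner.strip():
        raise UnownedDecisionError(f"No named owner for decision: {decision!r}")
    return {
        "decision": decision,
        "recommendation": recommendation,  # an input, not the decision itself
        "decided_by": boundary.owner,
    }


# Illustrative registry: the boundary is defined up front, not after a challenge.
registry = {
    "credit_limit_increase": DecisionBoundary(
        decision="credit_limit_increase",
        system_role="estimate default probability",
        owner="Head of Credit Risk",
    )
}
```

The design choice worth noticing is that the sketch refuses a recommendation for any decision without a named owner, rather than letting the output quietly fill the space.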

Presence: Stay Engaged When Automation Creates Distance

There is a pattern in product development called automation bias. It describes the tendency of users to over-trust automated outputs, especially when the system appears competent and the feedback loop is slow.

The more reliable the system seems, the more likely a person is to disengage from the decision it is supporting. Leaders are not exempt from this pattern.

As systems scale, the work arrives pre-processed. Inputs are summarised, options are ranked, and outputs feel partially complete before any human speaks. That is the design working as intended. It is also where presence becomes harder to maintain and more necessary than ever.

Presence is not scepticism for its own sake. It is not slowing down every recommendation or second-guessing every model. It is staying connected to the consequences that the system is not built to handle. In practice, it sounds like specific questions:

What assumption is driving this recommendation?

What has changed in the environment since this model was last validated?

What outcome are we prepared to own if this is wrong?

Those questions are the mechanism by which leaders remain accountable. When leaders disengage because the system appears confident, accountability does not transfer to the system. It simply goes unclaimed. And unclaimed accountability surfaces in outcomes, long after the moment to exercise judgment has passed.

Discernment: The Moment Leadership Becomes Visible

In product design, the most important interface is the one that handles edge cases, exceptions, and moments where the expected path breaks down. That is where design reveals its actual quality.

Leadership is no different.

Discernment is what happens at the edge of the system’s capability. It is the moment when a recommendation has arrived, the data has been reviewed, and the leader must decide what to do with it. That choice does not belong to the model.

This is not a failure of automation. Automation did its job by processing the inputs, applying the weights, and surfacing an output. What it cannot do is bear the consequence of acting on that output. That responsibility does not transfer, regardless of how capable the system becomes or how confident the recommendation appears.

Discernment is clarity and presence made actionable.

Organisational Maturity Is Measured by Explicitness

The Greeks did not fear powerful tools. They feared them in the hands of people who had not yet earned the wisdom to use them.

That is the through line in nearly every myth worth studying. Icarus did not fall because the wings failed. He fell because the boundary, though he had been warned of it, was never one he had internalised.

It is possible to fly too close to the sun when it comes to AI and machine autonomy. Organisations have already been burned, and the widely cited figure of 95% of AI pilots failing is a testament to that.

Organisations make the mistake of measuring maturity by capability. By how sophisticated the models are, how fast the dashboards update, and how many decisions the system can support in a given quarter. That is measuring the wings, not the judgment of the person wearing them.

The epics and modern-day case studies alike tell us again and again that the gift and the responsibility are inseparable. One without the other is not a blessing, but a liability.

The question is never whether the tools are powerful enough. Technology has never put more powerful tools in the hands of so many. The question is whether the humans using them have been explicit about what they are responsible for, whether there is a framework to guide that behaviour, and where human judgment must remain decisive and cannot be delegated to a model, regardless of its accuracy.

Maturity is not measured by how advanced the tools are. It is measured by how explicitly leaders answer three questions before the recommendation arrives, before the outcome is tested, and before accountability needs to be assigned.

Who decides?

Who owns what follows?

And where does human judgment remain the final word?

When those answers are clear, the organisation uses its tools better.

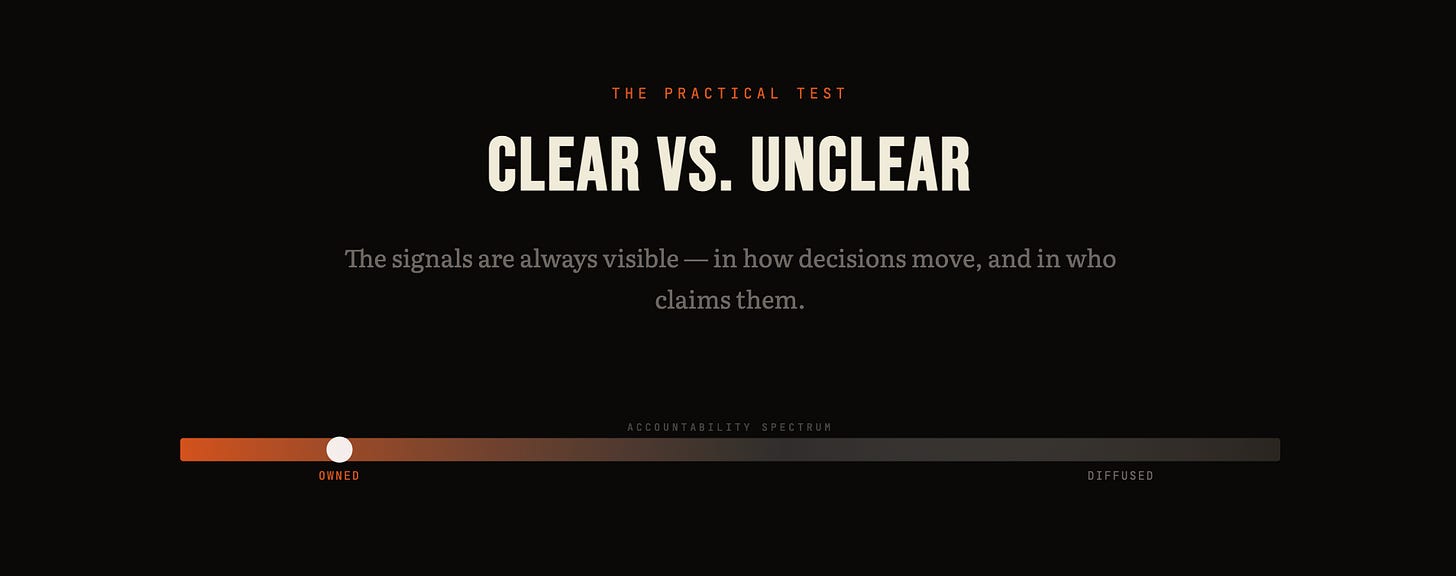

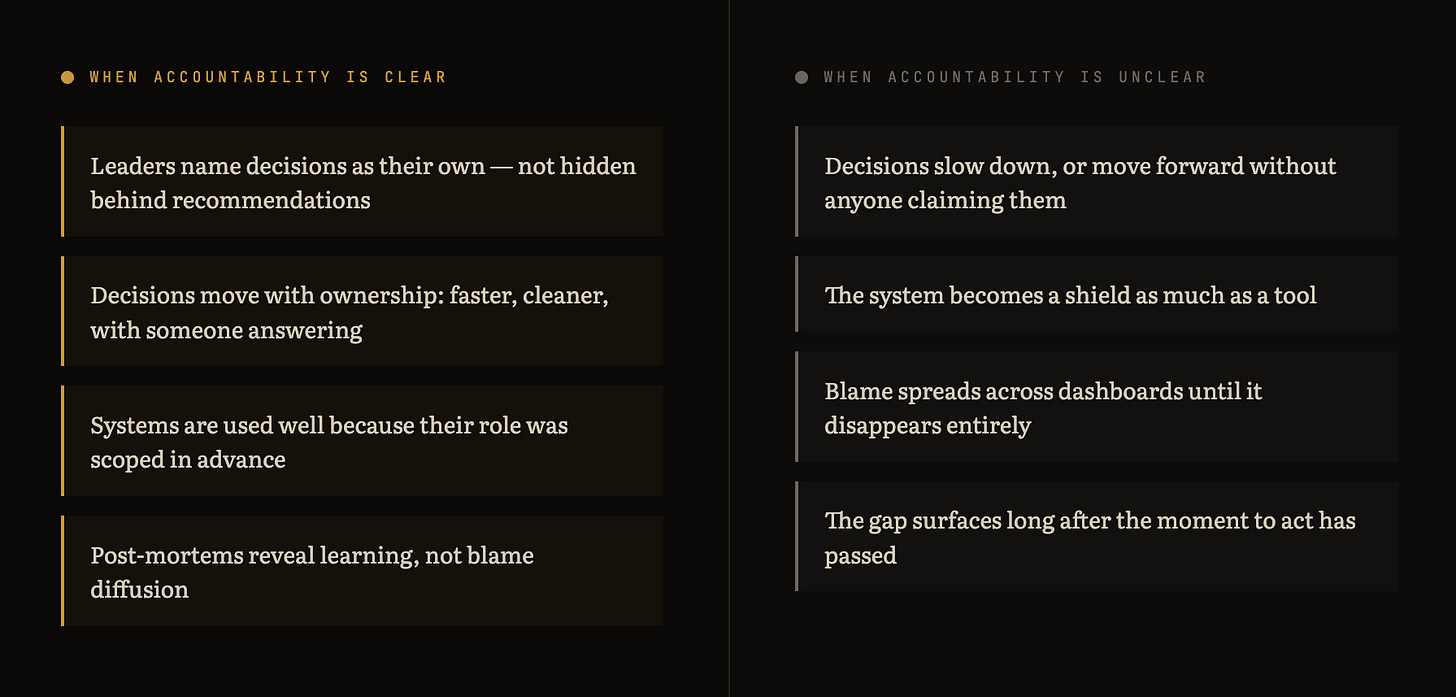

The Practical Test

When accountability is clear, leaders don’t hide behind recommendations, and decisions move with ownership.

When accountability is unclear, the symptoms are hesitation, blame, and diffusion of responsibility.

Either way, this is a leadership design problem, not a technology problem.

The Question Worth Carrying

Where does judgment stop being computational and start being mine?

That question is what keeps accountability from drifting, presence from fading, and discernment from being quietly outsourced to a recommendation that arrived with confidence but carries no consequences.

Leaders who carry that question become more effective as systems grow more capable because they remain clear about what the system is doing and what they are doing.

Confidence does not come from certainty, from a tighter model, or from a more reliable dashboard. It comes from knowing what you are responsible for and being willing to say so out loud. That willingness to name the decision as yours and to own what follows is not a soft skill sitting at the edge of leadership but the centre of it.

