Architectural Standards for Data Products and AI Interactions: Emergent & Aligned Patterns

The Emergence of Unified Standards: From MCPs and Data Products to Unified Data Platforms. Unifying Purpose, Platform, and Protocol to Scale Intelligence

Adapted from concepts shared by Author(s) | Curated by Modern Data 101

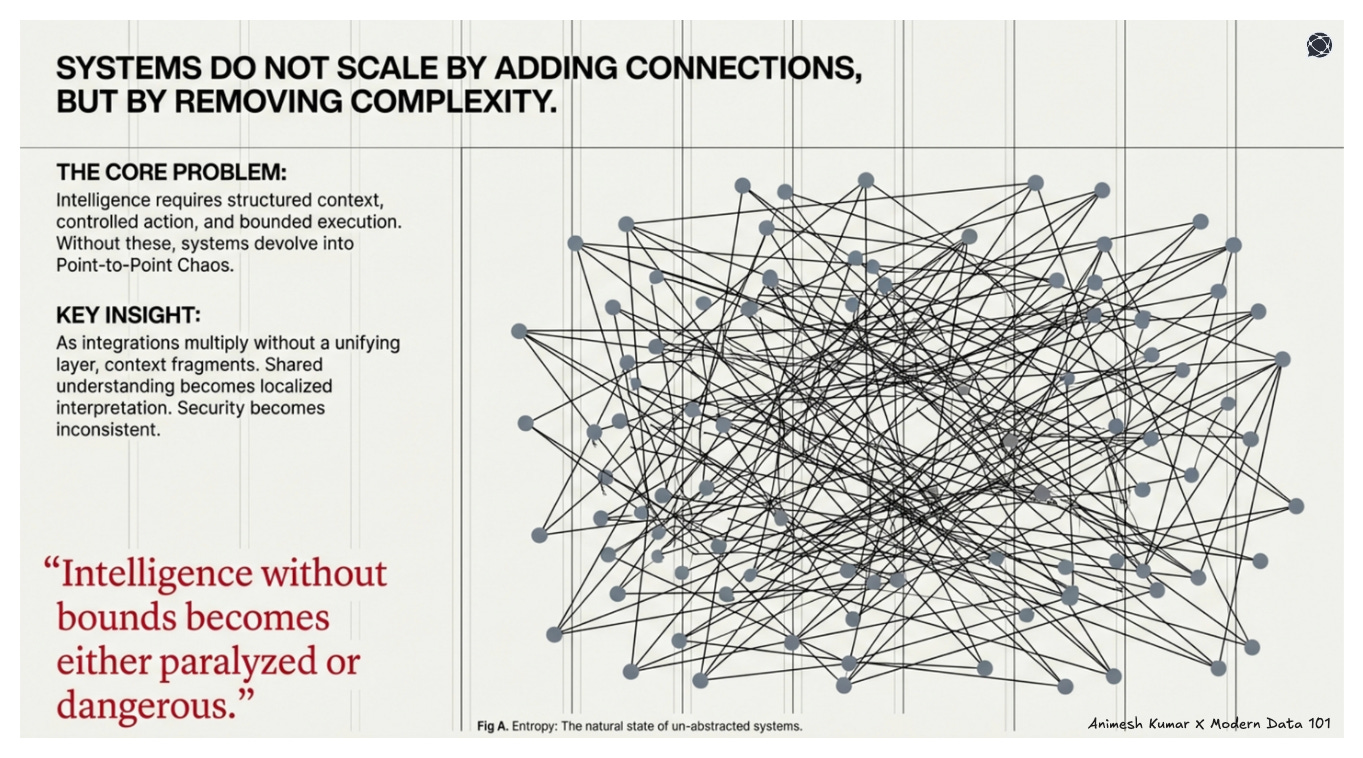

Why Unified Standards Emerged

Before we speak of protocols, platforms, or products, we must reduce the system to its irreducible parts. Strip away branding, tooling, and terminology, and what remains is intelligence attempting to act in an environment.

Intelligence, by definition, operates on context. Remove structured context, and the model substitutes probability for truth. But context alone is insufficient. Intelligence must also have a defined surface through which it can act.

And even action requires constraint. Without that, intelligence becomes either paralysed or dangerous.

So any system that enables AI must solve three things:

structured context,

controlled action, and

bounded execution.

Systems Scale Only Through Abstraction

Systems do not scale by adding connections but by removing complexity. And complexity is removed only through abstraction.

When integrations multiply without a unifying layer, the architecture begins to decay. Point-to-point connections proliferate until the system resembles a fragile web of dependencies rather than a coherent structure.

As this happens, context fragments. Each integration carries its own assumptions, schema, and semantics. What was once a shared understanding becomes a localised interpretation. Security follows the same pattern. Without a standard interface, every connection defines its own authentication model, permission logic, and failure modes.

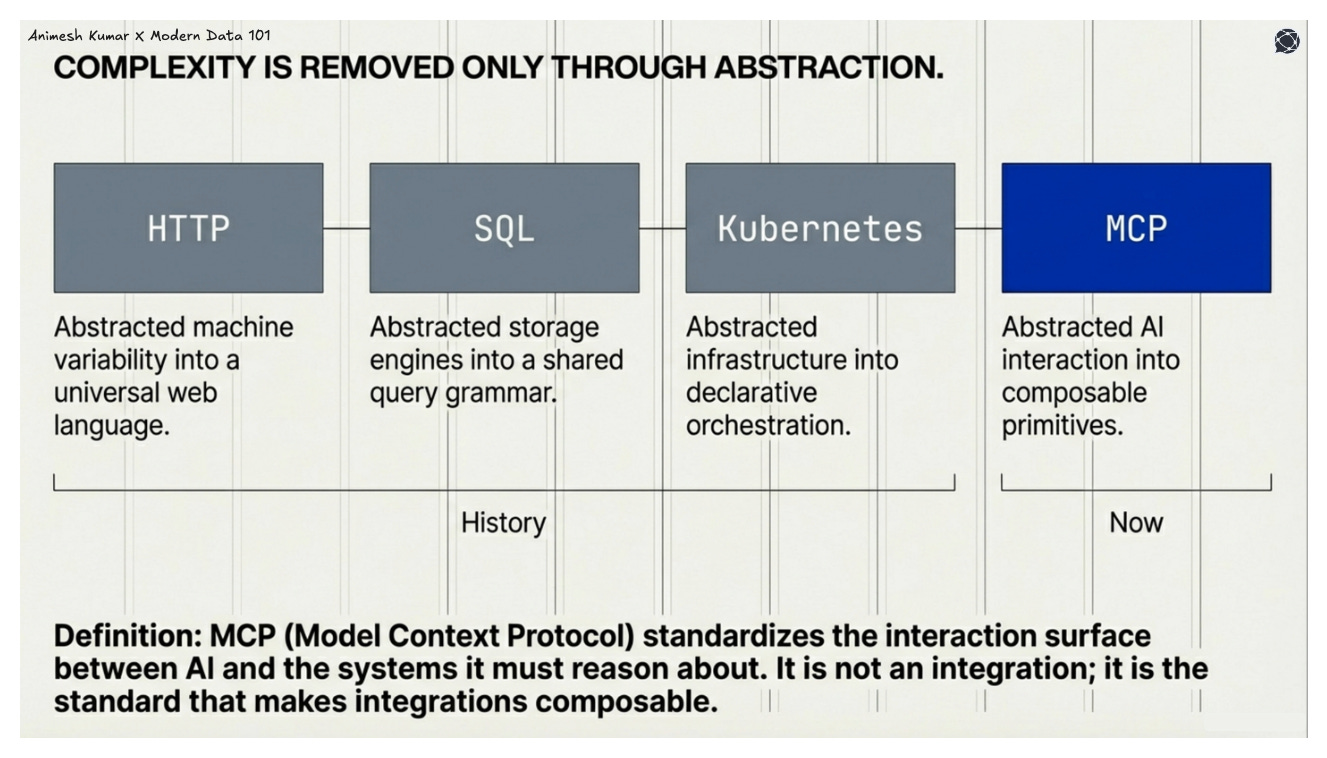

Historically, the escape from this entropy has always been the same: standardised interfaces that abstract variability.

HTTP abstracted the variability of machines into a universal language of web communication.

SQL abstracted storage engines into a shared grammar for querying data.

Kubernetes abstracted infrastructure into declarative orchestration.

MCP (Model Context Protocol) performs the same move for intelligence. It standardises the interaction surface between AI and the systems it must reason about and act upon. It is not another integration, but the abstraction that makes integrations composable.

MCP as a Unified Standard

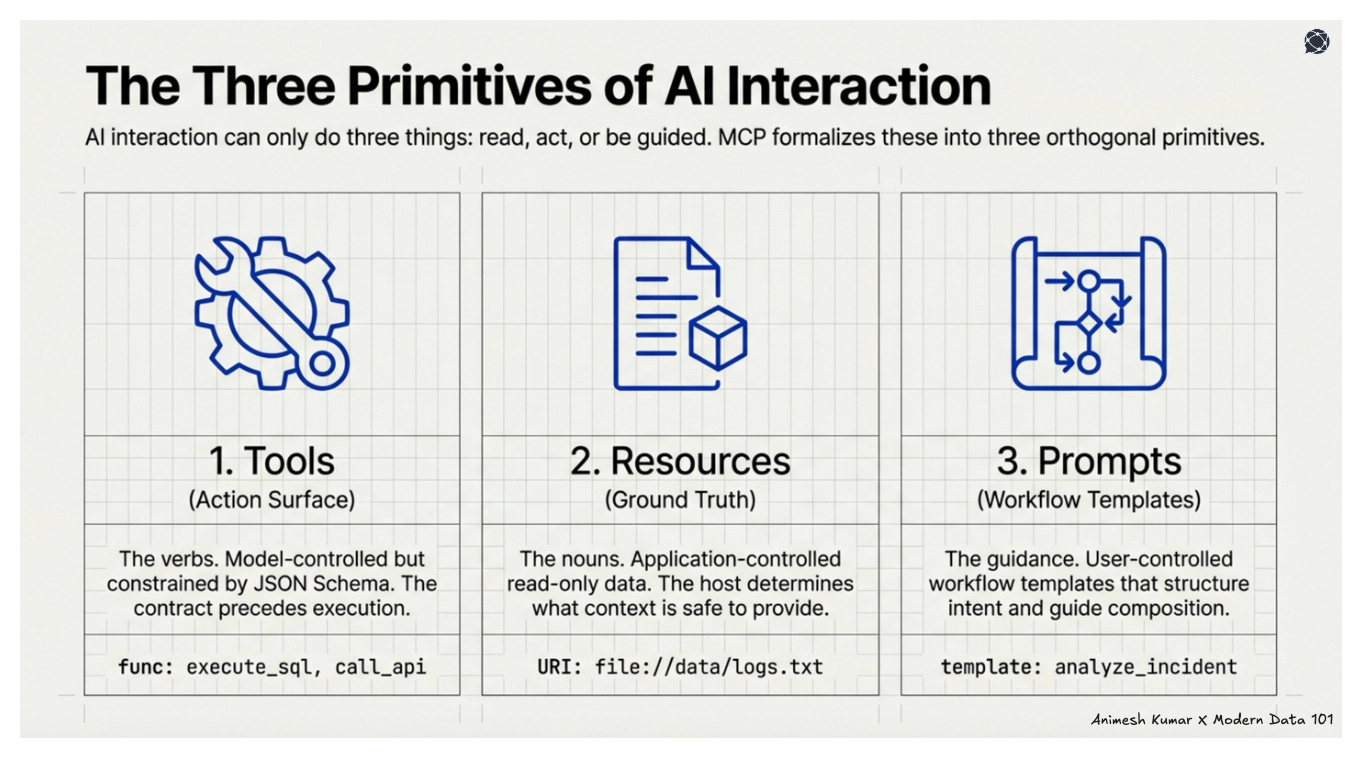

An AI interaction can do only three things: read, act, or be guided. Everything else is a composition of these. MCP formalises this into three orthogonal primitives.

These primitives are independent by design. Each has a clear owner, a clear boundary, and a clear responsibility. Together, they form a minimal but complete interaction model.

1. Tools (Action Surface)

Tools are the verbs used by the system. They are executable capabilities that allow intelligence to affect the external world, for example writing to a database, calling an API, modifying a file, or triggering a workflow.

They are model-controlled, meaning the model decides when to invoke them. But they are not free-form. They are constrained by typed inputs defined through JSON Schema.

The contract precedes the execution.

Every invocation is auditable. Action is no longer implicit in code hidden behind prompts; it becomes explicit, structured, and reviewable.
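To make "the contract precedes the execution" concrete, here is a minimal Python sketch of a tool definition in the shape MCP uses for tool listings (`name`, `description`, and an `inputSchema` holding a JSON Schema), plus a gate that validates arguments and records the attempt before anything runs. The tool `append_order_note`, its fields, the `isError` result shape (loosely mirroring MCP tool results), and the tiny validator are all hypothetical; the validator handles only `type`, `required`, and `additionalProperties`, and a real server would use a complete JSON Schema validator.

```python
import json

# Hypothetical tool definition, shaped like an MCP tool listing entry.
APPEND_ORDER_NOTE = {
    "name": "append_order_note",
    "description": "Append a note to an order record.",
    "inputSchema": {
        "type": "object",
        "properties": {
            "order_id": {"type": "string"},
            "note": {"type": "string"},
        },
        "required": ["order_id", "note"],
        "additionalProperties": False,
    },
}

# Subset of JSON Schema primitive types mapped to Python types.
_TYPES = {"string": str, "integer": int, "boolean": bool, "object": dict}

AUDIT_LOG: list[dict] = []


def validate_arguments(schema: dict, args: dict) -> list[str]:
    """Return a list of violations; an empty list means the call may proceed."""
    errors = []
    props = schema.get("properties", {})
    for field in schema.get("required", []):
        if field not in args:
            errors.append(f"missing required field: {field}")
    for field, value in args.items():
        if field not in props:
            if schema.get("additionalProperties", True) is False:
                errors.append(f"unexpected field: {field}")
            continue
        expected = _TYPES[props[field]["type"]]
        if not isinstance(value, expected):
            errors.append(f"{field} must be {props[field]['type']}")
    return errors


def invoke(tool: dict, args: dict, handler) -> dict:
    """Validate first, record the attempt, and only then execute the handler."""
    errors = validate_arguments(tool["inputSchema"], args)
    AUDIT_LOG.append({
        "tool": tool["name"],
        "args": json.dumps(args, sort_keys=True),
        "accepted": not errors,
    })
    if errors:
        return {"isError": True, "errors": errors}
    return {"isError": False, "result": handler(**args)}
```

Calling `invoke(APPEND_ORDER_NOTE, {"order_id": "A-17"}, handler)` never reaches `handler`: it returns the violation `missing required field: note`, and the rejected attempt still lands in the audit log.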

2. Resources (Ground Truth)

Resources are the nouns used by the system. They represent passive read-only contextual data: the ground truth against which reasoning occurs. Examples include file contents, database schemas, or API documentation.

They are application-controlled. The host determines what context is relevant and safe to provide, anchoring the model’s reasoning in bounded reality.
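A small sketch of what "application-controlled" can mean in practice: resources are read-only records addressed by URI, and the host (not the model) filters which of them enter the context. The URI schemes, the example resources, and the prefix-based allowlist are hypothetical choices for illustration; MCP itself leaves the selection policy to the host application.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Resource:
    """Read-only context addressed by URI; frozen, so fields cannot be reassigned."""
    uri: str
    mime_type: str
    text: str


# Hypothetical resources a server might offer.
OFFERED = [
    Resource("schema://sales/orders", "application/sql",
             "CREATE TABLE orders (order_id TEXT, amount NUMERIC);"),
    Resource("docs://sales/refund-policy", "text/markdown",
             "Refunds over 500 EUR need manager approval."),
    Resource("schema://hr/salaries", "application/sql",
             "CREATE TABLE salaries (employee_id TEXT, salary NUMERIC);"),
]


def select_context(offered: list[Resource], allowed_prefixes: tuple[str, ...]) -> list[Resource]:
    """The host decides which resources are relevant and safe to expose."""
    return [r for r in offered if r.uri.startswith(allowed_prefixes)]


def render_context(resources: list[Resource]) -> str:
    """Concatenate the selected resources into a bounded, grounded context block."""
    return "\n\n".join(f"[{r.uri}]\n{r.text}" for r in resources)
```

A sales assistant configured with `("schema://sales/", "docs://sales/")` sees the orders schema and the refund policy, while the HR schema never reaches the model.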

3. Prompts (Workflow Templates)

Prompts are structured intent: reusable workflow templates that guide how tools and resources should be composed.

They are user-controlled. Invocation is explicit, not inferred, preserving agency in complex operations. They help structure interactions and provide a consistent way to initiate complex tasks by telling the model how to work with specific tools and resources.
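The sketch below shows a prompt as a named template with declared arguments that renders into chat-style messages only when the user supplies every required value. It is loosely modelled on MCP's prompt shape (a name, a list of arguments, and messages with a `role` and text `content`); the template `investigate_refund`, its wording, and the referenced tool and resource names are hypothetical.

```python
from dataclasses import dataclass
from string import Template


@dataclass(frozen=True)
class PromptArgument:
    name: str
    required: bool = True


@dataclass(frozen=True)
class PromptTemplate:
    """A user-invoked workflow template with explicitly declared arguments."""
    name: str
    arguments: tuple[PromptArgument, ...]
    template: str

    def render(self, values: dict[str, str]) -> list[dict]:
        """Fill the template and return messages; refuse to guess missing arguments."""
        missing = [a.name for a in self.arguments if a.required and a.name not in values]
        if missing:
            raise ValueError(f"missing arguments: {missing}")
        text = Template(self.template).substitute(values)
        return [{"role": "user", "content": {"type": "text", "text": text}}]


# Hypothetical template that tells the model how to combine a resource and a tool.
INVESTIGATE_REFUND = PromptTemplate(
    name="investigate_refund",
    arguments=(PromptArgument("order_id"),),
    template=(
        "Read resource docs://sales/refund-policy, then inspect order $order_id. "
        "Only call append_order_note once the policy check is complete."
    ),
)
```

Because invocation is explicit, `INVESTIGATE_REFUND.render({})` raises instead of letting the model infer an order ID.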

Unified Architecture Pattern Inside MCP

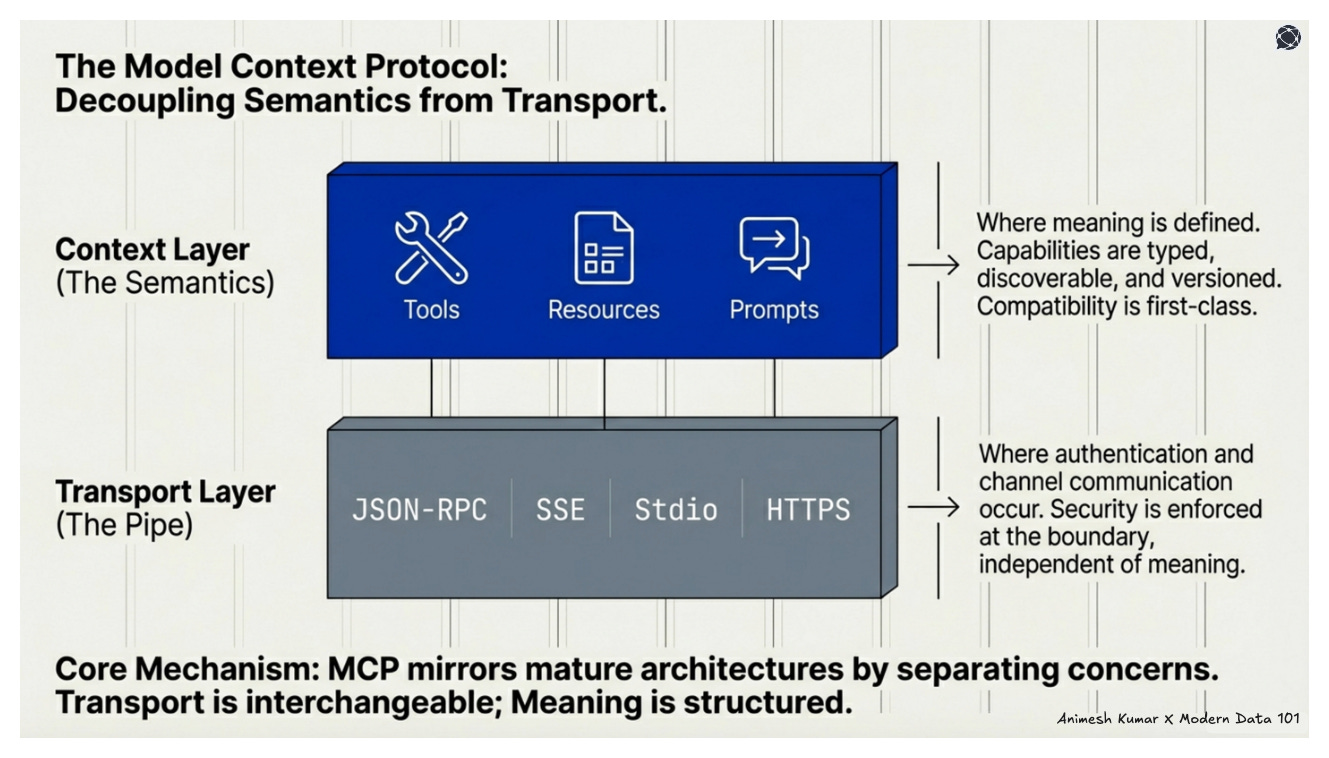

MCP unifies AI interaction across two independent dimensions: semantics and transport. This separation is what allows the protocol to scale without collapsing into coupling.

A. Context Layer (Data Layer)

At the data context layer, MCP defines meaning. It uses a structured message format (JSON-RPC 2.0), ensuring that communication is not ad hoc but typed and predictable.

The MCP primitives (tools, resources, prompts) exist here as structured capabilities. They are discoverable through explicit methods like tools/list and resources/list, which means capability is declared rather than assumed.
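Discovery can be sketched as plain JSON-RPC 2.0 traffic: the client asks `tools/list` or `resources/list`, and the server answers from metadata it has declared up front. The declared entries and the dispatcher below are hypothetical and heavily simplified (no initialization, pagination, or capability flags); only the JSON-RPC envelope and the standard `-32601` "Method not found" error code come from the JSON-RPC 2.0 specification.

```python
import json
from typing import Optional


def make_request(request_id: int, method: str, params: Optional[dict] = None) -> str:
    """Serialise a JSON-RPC 2.0 request."""
    msg = {"jsonrpc": "2.0", "id": request_id, "method": method}
    if params is not None:
        msg["params"] = params
    return json.dumps(msg)


# Hypothetical capabilities the server declares before anyone asks.
DECLARED = {
    "tools/list": {"tools": [{"name": "append_order_note",
                              "inputSchema": {"type": "object"}}]},
    "resources/list": {"resources": [{"uri": "schema://sales/orders",
                                      "name": "orders schema"}]},
}


def handle(raw: str) -> str:
    """Answer discovery requests from declared metadata; unknown methods get -32601."""
    req = json.loads(raw)
    if req["method"] in DECLARED:
        return json.dumps({"jsonrpc": "2.0", "id": req["id"],
                           "result": DECLARED[req["method"]]})
    return json.dumps({"jsonrpc": "2.0", "id": req["id"],
                       "error": {"code": -32601, "message": "Method not found"}})
```

Nothing outside `DECLARED` is reachable: a request for an undeclared method returns a protocol error rather than an improvised answer.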

Version negotiation also belongs to this layer. Before execution, both sides agree on what they understand. Compatibility becomes a first-class concern rather than an afterthought.
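A simplified sketch of the negotiation rule as described in the MCP lifecycle specification: the client proposes the latest revision it supports, the server echoes it if supported or otherwise offers its own latest, and the client continues only if it understands the offer. The version sets are illustrative, and a real handshake also exchanges capabilities, which this sketch omits.

```python
from typing import Optional

# Illustrative revision sets; MCP protocol versions are date strings.
SERVER_SUPPORTS = ["2024-11-05", "2025-03-26", "2025-06-18"]
CLIENT_SUPPORTS = ["2024-11-05", "2025-03-26"]


def server_choose(requested: str, supported: list[str]) -> str:
    """Echo the requested version if supported, else offer the server's latest."""
    if requested in supported:
        return requested
    return max(supported)  # YYYY-MM-DD strings sort chronologically


def negotiate(client_supported: list[str], server_supported: list[str]) -> Optional[str]:
    """Return the agreed version, or None if the client should disconnect."""
    requested = max(client_supported)  # the client proposes its latest version
    offered = server_choose(requested, server_supported)
    return offered if offered in client_supported else None
```

With the sets above both sides settle on `2025-03-26`; a client that knows only `2024-11-05` talking to a server that knows only `2025-06-18` gets `None`, so the incompatibility surfaces before any tool is called.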

B. Transport Layer

Beneath context and semantics is transport. MCP supports multiple communication channels, such as stdio for local processes and Streamable HTTP for remote servers. Authentication mechanisms operate here. Security is enforced at the communication boundary, independent of semantic meaning. Transport is interchangeable, while meaning is not.
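"Transport is interchangeable, while meaning is not" can be illustrated with two transports behind one interface. The first mimics stdio-style framing (one JSON message per line); the second is an in-memory queue standing in for any other channel. Both classes are hypothetical stand-ins, not the official SDK transports, and the in-memory one is not an HTTP implementation.

```python
import io
import json
from collections import deque
from typing import Protocol


class Transport(Protocol):
    def send(self, message: dict) -> None: ...
    def receive(self) -> dict: ...


class NewlineStreamTransport:
    """stdio-style framing: one JSON message per line, no embedded newlines."""

    def __init__(self) -> None:
        self.buffer = io.StringIO()
        self.read_pos = 0

    def send(self, message: dict) -> None:
        # json.dumps escapes newlines inside strings, so each message is one line.
        self.buffer.write(json.dumps(message) + "\n")

    def receive(self) -> dict:
        self.buffer.seek(self.read_pos)
        line = self.buffer.readline()
        self.read_pos = self.buffer.tell()
        self.buffer.seek(0, io.SEEK_END)  # keep writes appending at the end
        return json.loads(line)


class InMemoryTransport:
    """A queue standing in for any other channel."""

    def __init__(self) -> None:
        self.queue: deque = deque()

    def send(self, message: dict) -> None:
        self.queue.append(message)

    def receive(self) -> dict:
        return self.queue.popleft()


def round_trip(transport: Transport, message: dict) -> dict:
    """The semantic layer sees only dicts; framing is the transport's concern."""
    transport.send(message)
    return transport.receive()
```

The same `tools/list` request survives either round trip unchanged, which is the point: swapping the channel does not touch the meaning of the message.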

…

By decoupling these, MCP follows the same pattern as HTTP, SQL, and Kubernetes: abstraction at the right boundary is what makes a system extensible. MCP applies that approach to intelligence itself.

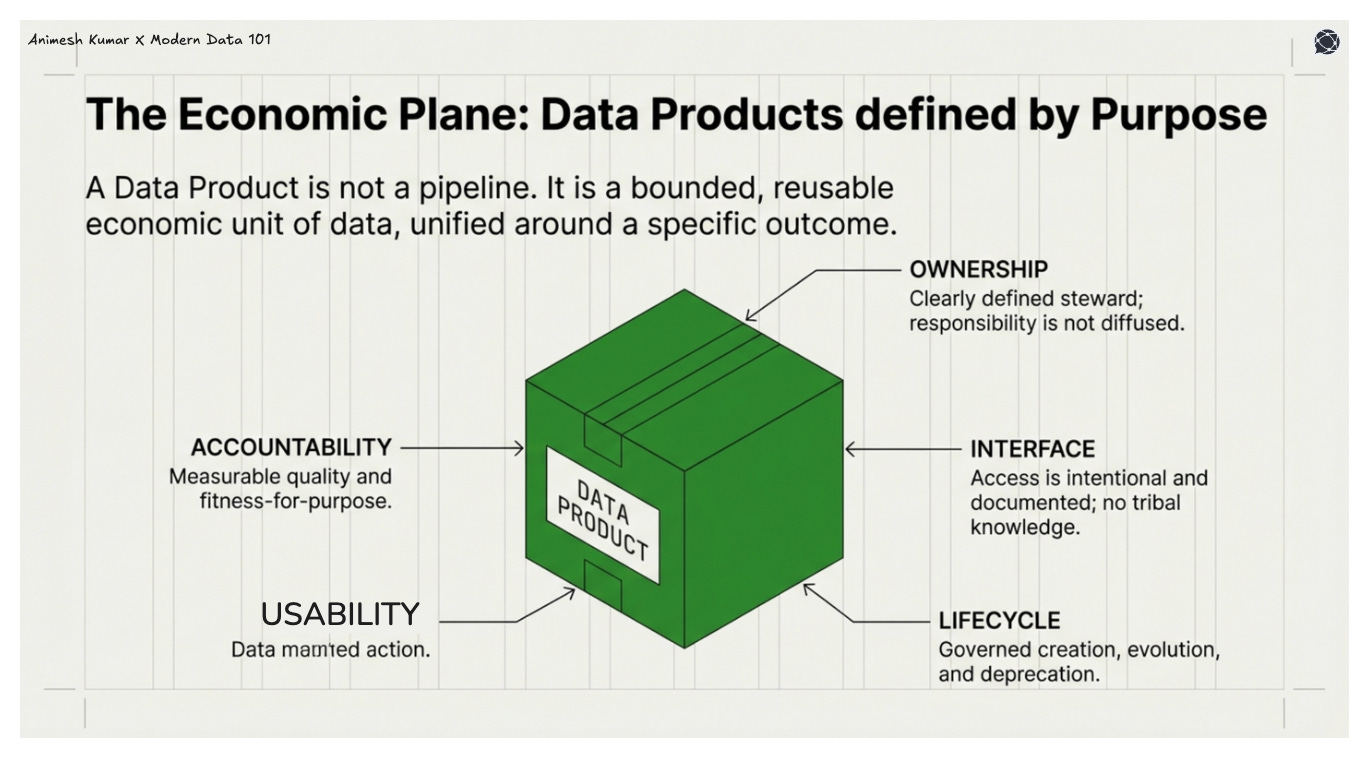

Data Products: The Unified Standard (For Purpose)

A Data Product is unified around purpose.

What Makes a Data Product a Unified Standard

A standard exists when variability is constrained by contract. A Data Product becomes a unified standard when it defines what must remain stable and independent, even as everything around it changes state.

It standardises:

Ownership: There is a clearly defined steward. Responsibility is not diffused across teams or hidden inside pipelines.

Accountability: Quality, availability, and fitness-for-purpose are measurable and enforced.

Interface: Access is intentional and documented. Consumers interact through a defined surface instead of using implicit tribal knowledge.

Consumer obligation: The producer acknowledges the needs of a specific audience. Reuse is designed in, not incidental.

Lifecycle: Creation, evolution, deprecation → each phase is governed. The product has a temporal boundary.
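The five standardised properties above can be pictured as one contract object. In this hypothetical Python sketch each field maps to one property, and lifecycle changes are only possible through governed transitions. The field names, the stage names, and the choice to allow a deprecated product to be reactivated are assumptions for illustration, not a prescribed specification.

```python
from dataclasses import dataclass, field
from enum import Enum


class Stage(Enum):
    DRAFT = "draft"
    ACTIVE = "active"
    DEPRECATED = "deprecated"
    RETIRED = "retired"


# Governed lifecycle: only these transitions are legal (an assumed policy).
ALLOWED = {
    Stage.DRAFT: {Stage.ACTIVE},
    Stage.ACTIVE: {Stage.DEPRECATED},
    Stage.DEPRECATED: {Stage.ACTIVE, Stage.RETIRED},
    Stage.RETIRED: set(),
}


@dataclass
class DataProductContract:
    name: str
    owner: str                      # Ownership: a single accountable steward
    slo_freshness_hours: int        # Accountability: a measurable promise
    output_schema: dict[str, str]   # Interface: the documented surface
    consumers: list[str]            # Consumer obligation: who it is designed for
    stage: Stage = Stage.DRAFT      # Lifecycle: governed state
    history: list[str] = field(default_factory=list)

    def transition(self, target: Stage) -> None:
        """Move to a new stage only if the lifecycle policy allows it."""
        if target not in ALLOWED[self.stage]:
            raise ValueError(f"{self.stage.value} -> {target.value} is not allowed")
        self.history.append(f"{self.stage.value}->{target.value}")
        self.stage = target
```

A draft product cannot jump straight to `RETIRED`; it has to pass through activation and deprecation, and each step is recorded in `history`.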

A Data Product is not a pipeline but a bounded, purpose-aligned, reusable economic unit of data.

Architectural Resonance: Data Product ↔ MCP

When two systems are built from the same sound first principles, they tend to mirror each other. Data Products and MCP resonate visibly because they solve adjacent problems with the same architectural language.

A Data Product defines an interface for consumers. In MCP, that interface becomes a tool schema (a typed contract that intelligence can safely invoke).

A Data Product provides context shaped for a specific purpose. In MCP, that context is formalised as resources.

A Data Product encodes workflows that guide how it should be used. In MCP, prompts structure execution, turning intent into a repeatable interaction pattern.

Ownership in a Data Product establishes bounded responsibility. In MCP, the server boundary enforces controlled scope, defining where authority begins and ends.

Observability ensures that a Data Product can be trusted. In MCP, activity logs and auditable tool calls make every action inspectable.
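The five correspondences above can be read as a recipe for wrapping a Data Product in an MCP-style server. This hypothetical sketch is not the MCP SDK: the class, the `<product>_query` naming, the `schema://` URI, and the row-slicing "query" are illustrative. Argument validation is omitted for brevity; the focus is on where each Data Product concern lands.

```python
import json
from datetime import datetime, timezone


class DataProductServer:
    """Hypothetical: one server per data product, so its boundary is the product's scope."""

    def __init__(self, product: str, owner: str, output_schema: dict, rows: list[dict]):
        self.product, self.owner = product, owner
        self._rows = rows
        self.audit: list[dict] = []
        # Interface -> typed tool schema
        self.tools = {
            f"{product}_query": {
                "name": f"{product}_query",
                "inputSchema": {
                    "type": "object",
                    "properties": {"limit": {"type": "integer"}},
                    "required": ["limit"],
                },
            }
        }
        # Purpose-shaped context -> resources
        self.resources = {f"schema://{product}": json.dumps(output_schema)}
        # Usage workflow -> prompt template
        self.prompts = {
            f"{product}_explore": f"Read schema://{product} before calling {product}_query."
        }

    def call_tool(self, name: str, args: dict, caller: str) -> list[dict]:
        # Ownership -> anything outside this product's tools is refused
        if name not in self.tools:
            raise PermissionError(f"{name} is outside the {self.product} boundary")
        # Observability -> every accepted call is logged before it runs
        self.audit.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "caller": caller,
            "tool": name,
            "args": args,
        })
        return self._rows[: int(args["limit"])]
```

The design choice worth noting is the one-server-per-product boundary: a request for another product's tool fails with `PermissionError` instead of silently widening the scope.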

MCP operationalises Data Products for AI. It translates purpose-bound economic units into machine-consumable contracts.

Where Data Products unify around business purpose, MCP unifies around AI interaction. One defines why value exists. The other defines how intelligence engages with it.

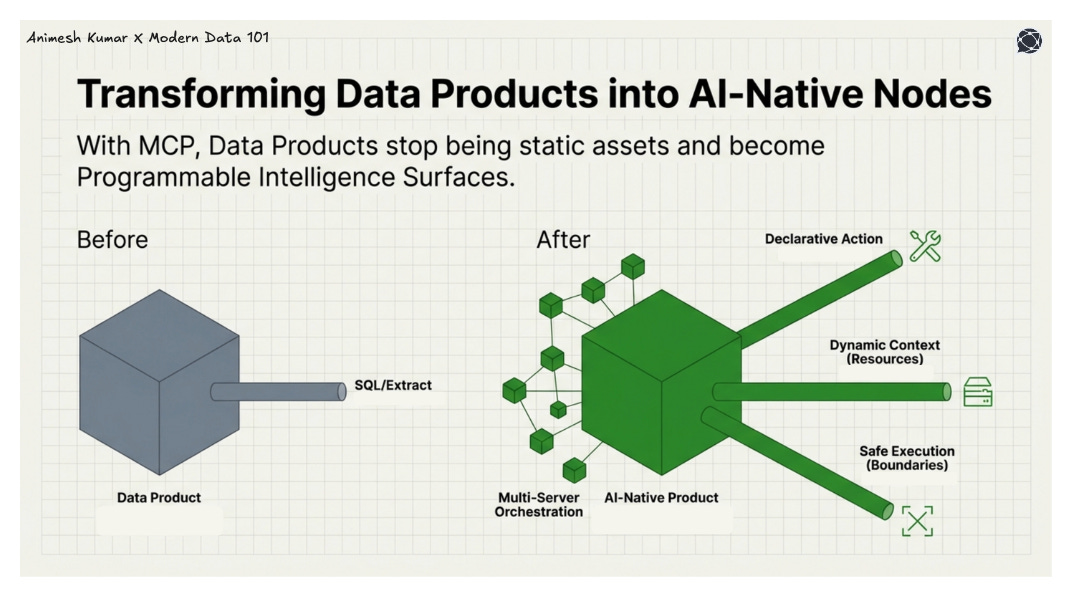

Role of MCP in Data Product Development

If Data Products are built for purpose, they must eventually be built for intelligence. The moment AI becomes a consumer, the interaction surface must be formalised, and MCP provides that formalisation.

MCP enables:

AI-native Data Product interfaces: Instead of wrapping products in ad hoc prompts or brittle middleware, it exposes typed, discoverable contracts that intelligence can reliably invoke.

Declarative action surfaces: Tools are no longer hidden behind code or implicit workflows. They are explicit capabilities with defined schemas and auditable execution.

Safe execution boundaries: Action is constrained by permissions, approvals, and scoped servers, ensuring that intelligence operates within governed limits.

Dynamic context retrieval: Through structured resources and templates, products expose live, parameterised ground truth rather than static extracts.

Multi-server orchestration: A single host can compose multiple Data Products into a unified capability surface, without collapsing ownership boundaries.
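Multi-server orchestration without collapsing ownership can be sketched as a host that namespaces every tool by the server that owns it and routes calls back to that owner. The `Host` class and the `server__tool` naming convention are hypothetical illustrations, not part of the MCP specification.

```python
from typing import Callable

ToolFn = Callable[[dict], object]


class Host:
    """Composes several servers into one capability surface without merging them."""

    def __init__(self) -> None:
        self._routes: dict[str, tuple[str, ToolFn]] = {}

    def connect(self, server_id: str, tools: dict[str, ToolFn]) -> None:
        for tool_name, fn in tools.items():
            qualified = f"{server_id}__{tool_name}"  # namespace keeps provenance visible
            if qualified in self._routes:
                raise ValueError(f"duplicate tool {qualified}")
            self._routes[qualified] = (server_id, fn)

    def list_tools(self) -> list[str]:
        """One unified surface, but every name still says who owns it."""
        return sorted(self._routes)

    def call(self, qualified: str, args: dict) -> tuple[str, object]:
        """Route to the owning server; return (owner, result) so ownership stays explicit."""
        server_id, fn = self._routes[qualified]
        return server_id, fn(args)
```

Two products can both expose a `query` tool; the host presents `customers__query` and `orders__query` side by side, and each call is answered by the server that owns it.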

With MCP, Data Products become plug-and-play intelligence nodes. The contract is stable, the boundaries are clear, and intelligence can scale without architectural entropy.

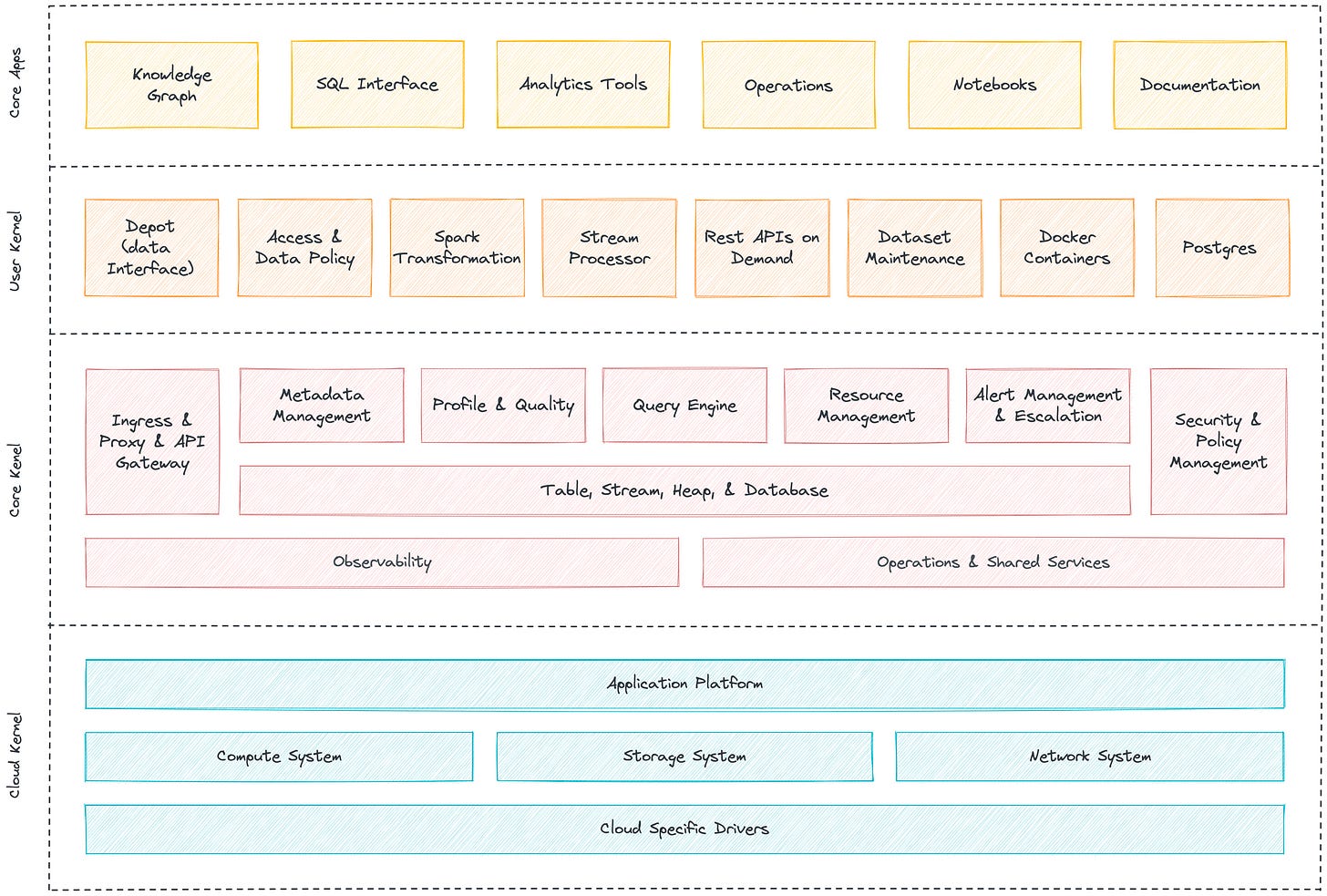

Unified Standard for Builders: DDP

If Data Products unify around purpose, something must unify how they are built. Scale cannot depend on artisanal engineering.

A Data Developer Platform (DDP) standardises the conditions under which Data Products are created, governed, and evolved. It reduces variance at the builder layer so that the purpose can be delivered reliably.

A DDP standardises:

Modelling: Data structures are designed through shared conventions.

Governance: Policies are embedded into workflows rather than enforced retroactively.

Observability: Health, quality, and performance are measurable by default.

Access control: Permissions are embedded and reproducible.

CI/CD: Change is versioned, tested, and promoted.

Environment management: Development, staging, and production operate under consistent rules.

Metadata: Context about data is first-class, not an afterthought.

Product lifecycle: Creation, iteration, and deprecation follow defined processes.
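"Policies are embedded into workflows rather than enforced retroactively" can look like a promotion gate that a CI pipeline runs before a product moves between environments. This is a hypothetical sketch: the required fields, the PII rule, and the `dev`/`staging`/`prod` sequence are assumptions chosen for illustration, and empty values are treated as missing.

```python
REQUIRED_FIELDS = ("name", "owner", "output_schema", "slo_freshness_hours", "classification")
ENVIRONMENTS = ("dev", "staging", "prod")


def policy_violations(spec: dict) -> list[str]:
    """Governance embedded in the workflow: checks run before promotion, not after."""
    problems = [f"missing {f}" for f in REQUIRED_FIELDS if not spec.get(f)]
    if spec.get("classification") == "pii" and not spec.get("access_roles"):
        problems.append("pii products must declare access_roles")
    return problems


def promote(spec: dict, current_env: str) -> str:
    """Move a product one environment forward only if every policy passes."""
    problems = policy_violations(spec)
    if problems:
        raise RuntimeError("; ".join(problems))
    idx = ENVIRONMENTS.index(current_env)
    if idx == len(ENVIRONMENTS) - 1:
        raise RuntimeError("already in prod")
    return ENVIRONMENTS[idx + 1]
```

A spec without an owner never leaves `dev`, and a PII product cannot be promoted until its access roles are declared, so the same rules apply in every environment.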

Where Data Products unify purpose, DDP unifies creation and governance. One defines why a product exists. The other ensures it can be built, scaled, and trusted.

DDP’s Core Layers

A Data Developer Platform resolves into distinct layers:

Compute Layer: Where transformations execute, and logic materialises into state.

Storage Layer: Where data persists, versioned and retrievable.

Metadata Layer: Where meaning about data (lineage, ownership, definitions) is formalised.

Orchestration Layer: Where dependencies, timing, and execution order are coordinated.

Governance Layer: Where policy, quality, and compliance are enforced.

Interface Layer: Where products expose their contracts to consumers.

Experience Layer: Where builders and stakeholders interact with the system.

Each layer isolates a fundamental concern. Together, they transform scattered tooling into coherent infrastructure. DDP ensures that builders can reliably produce standardised Data Products.

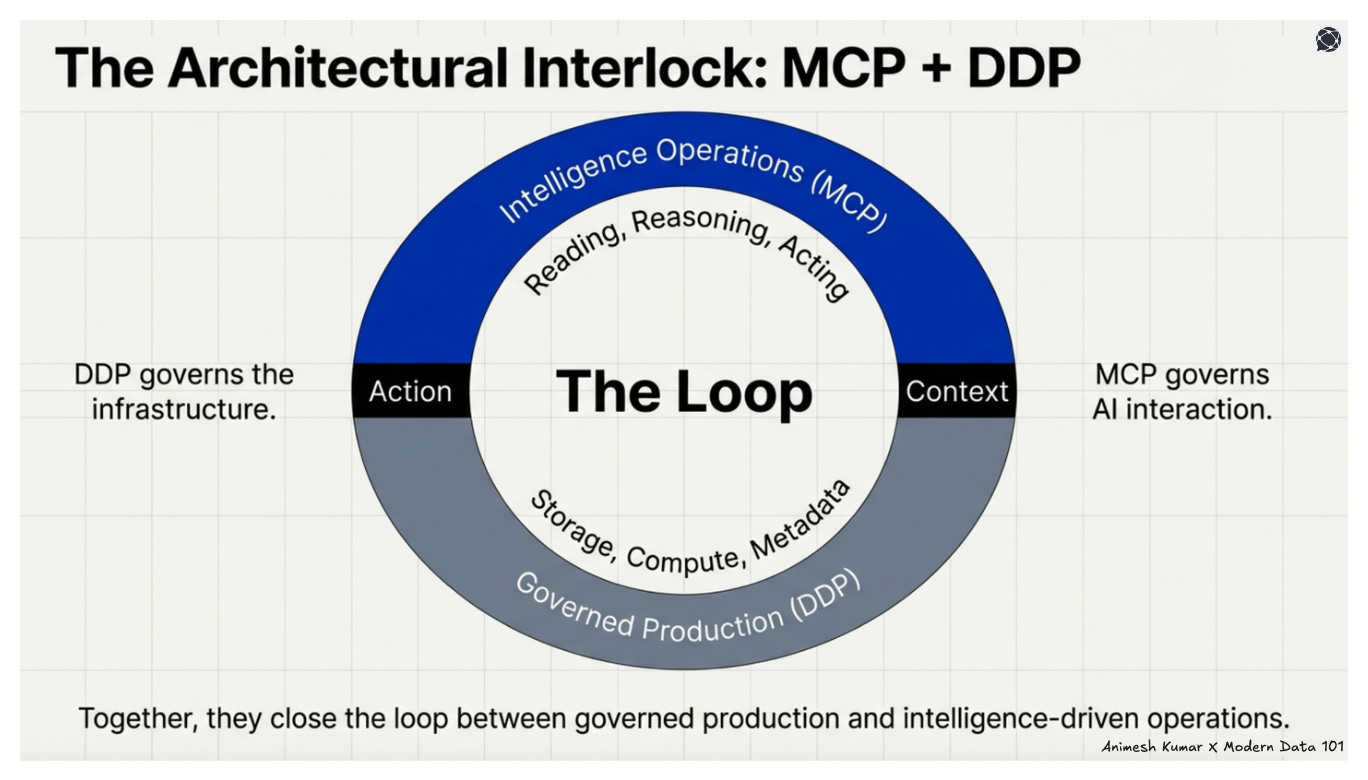

MCP ↔ DDP: Architectural Interlock

MCP connects intelligence to Data Developer Platforms. If DDP industrialises data production, MCP industrialises AI interaction with that production. The two systems interlock at their boundaries.

MCP standardises how intelligence reads from, reasons about, and acts upon the capabilities of a Data Developer Platform.

DDP governs the infrastructure. MCP governs AI interaction with it. Together, they close the loop between governed data production and intelligence-driven operations at scale.

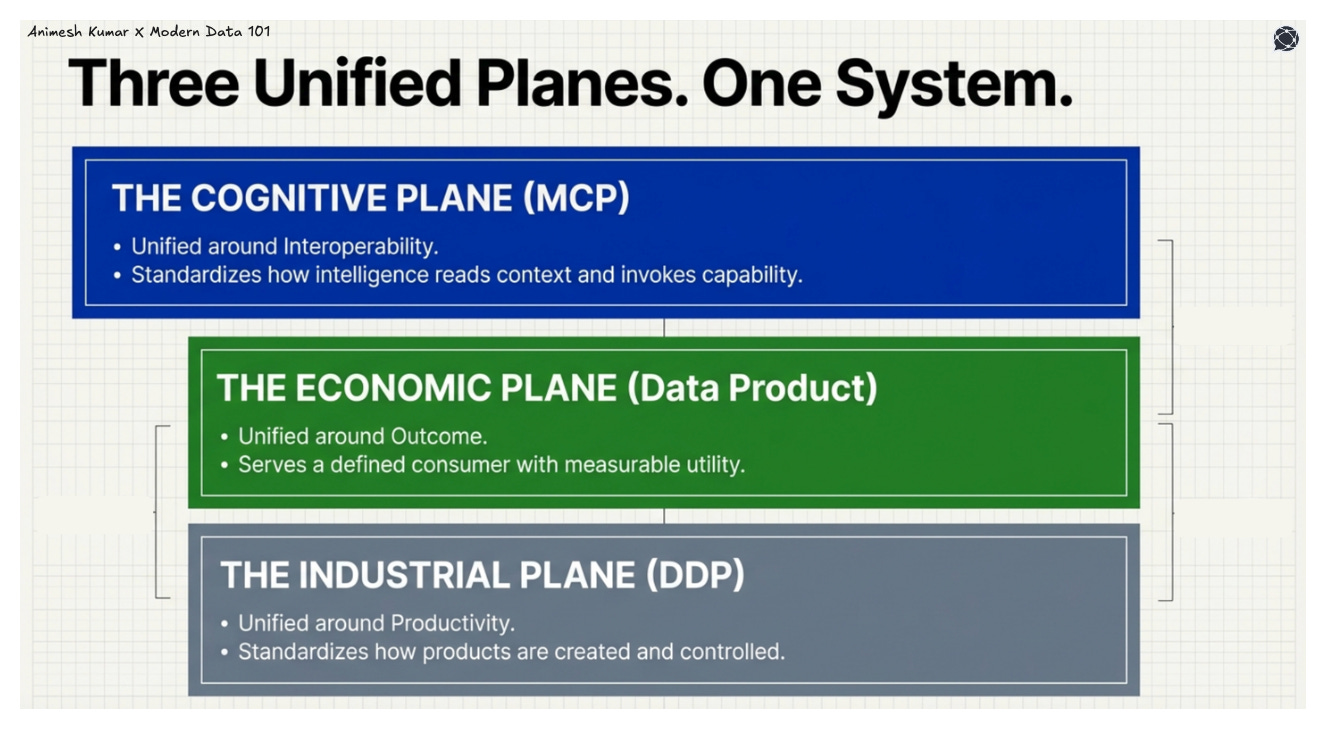

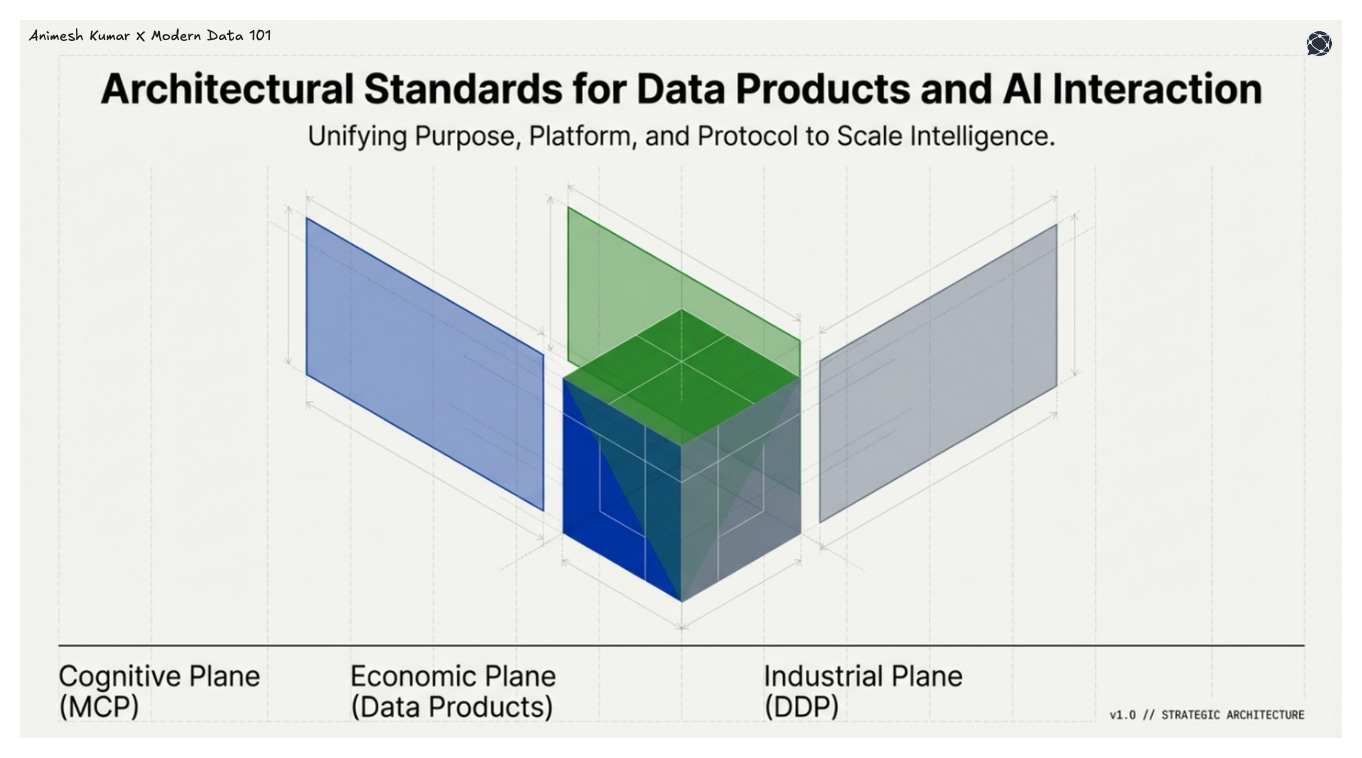

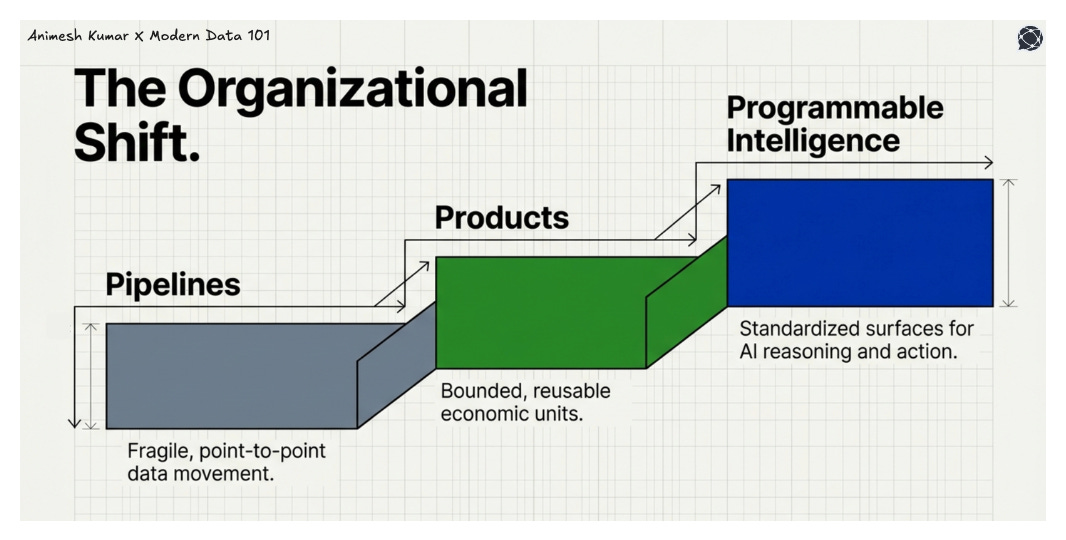

Three Unified Standards, Three Planes

The architecture resolves into three distinct planes. Each plane solves a different problem, yet all follow the same structural logic.

Purpose (Data Product)

The first plane is economic. Data Products are unified around outcome. They exist to serve a defined consumer, with measurable utility and bounded responsibility.

Platform (DDP)

The second plane is industrial. The Data Developer Platform is unified around builder productivity and governance. It standardises how products are created, evolved, and controlled at scale.

Intelligence Interface (MCP)

The third plane is cognitive. MCP is unified around AI-system interoperability. It standardises how intelligence reads context, invokes capability, and operates within boundaries.

Across all three planes, the same architectural language appears:

Interfaces

Contracts

Ownership

Boundaries

Discovery

Versioning

Observability

These are structural requirements of scalable systems, and they are what make the convergence click.

Business Purpose → Architectural Language → Direct Value

Architecture is the translation between business intent and operational reality. When the three systems that manage economic value, infrastructure, and communication with humans and machines share one architectural language, value can be planned and delivered far more predictably.

Reduced Integration Cost

A unified AI interface eliminates bespoke connectors. Instead of rebuilding integration logic for every tool and dataset, intelligence interacts through a stable contract. Cost declines because variability is abstracted.
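The cost argument is the familiar M×N versus M+N count. With point-to-point integration, every AI application needs its own connector to every data source; with a shared protocol, each application implements the client side once and each source implements a server once. The sketch counts connectors only; the example sizes are arbitrary, and it ignores that individual implementations differ in effort.

```python
def point_to_point(ai_apps: int, data_sources: int) -> int:
    """Every app needs its own connector to every source."""
    return ai_apps * data_sources


def via_protocol(ai_apps: int, data_sources: int) -> int:
    """Each app implements the client side once; each source exposes one server."""
    return ai_apps + data_sources


for apps, sources in [(3, 5), (10, 40)]:
    print(apps, sources, point_to_point(apps, sources), via_protocol(apps, sources))
# 3 5 15 8
# 10 40 400 50
```

The gap widens as either side grows, which is why abstracting variability lowers cost rather than merely moving it.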

Faster Productisation

When Data Products expose typed interfaces and governed context, they are AI-ready by design. Intelligence does not need to reverse-engineer meaning.

Safer Automation

Automation without boundaries is the most fragile governance model, and unfortunately it is still common in many organisations. MCP introduces human-in-the-loop checkpoints, typed tool validation, and scoped execution roots, orchestrated by DDP.
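A hypothetical sketch of two of these checkpoints working together: a call is first checked against the roots the host has exposed, and destructive tools additionally require explicit human approval. In MCP, roots are URIs that clients share with servers; here they are simplified to POSIX paths, and enforcing them in the host, the tool names, and the approval callback are assumptions for illustration.

```python
from pathlib import PurePosixPath
from typing import Callable

# Hypothetical roots the host exposes: the only places a server may touch.
ROOTS = [PurePosixPath("/workspace/sales")]
DESTRUCTIVE = {"delete_file", "overwrite_table"}


def within_roots(path: str, roots: list[PurePosixPath]) -> bool:
    """True if path equals or sits under one of the roots."""
    p = PurePosixPath(path)
    if ".." in p.parts:  # PurePosixPath does not resolve "..", so refuse it outright
        return False
    return any(p == r or r in p.parents for r in roots)


def execute(tool: str, path: str,
            approve: Callable[[str, str], bool],
            run: Callable[[str], str]) -> str:
    """Scope first, then ask a human for destructive actions, then run."""
    if not within_roots(path, ROOTS):
        return f"denied: {path} is outside the exposed roots"
    if tool in DESTRUCTIVE and not approve(tool, path):
        return f"denied: {tool} was not approved"
    return run(path)
```

Reads inside `/workspace/sales` proceed directly, deletes there wait for a human decision, and anything under `/workspace/hr` (or a `..` escape attempt) is refused before approval is even requested.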

Composable Intelligence

Multiple servers can be orchestrated as a single capability surface. Intelligence no longer operates within isolated silos but across coordinated domains. Composition replaces integration as the scaling mechanism.

Governance Alignment

Permissions operate at the tool level. Every action is logged, auditable, and reviewable. Approvals are structured, not implicit. Governance is embedded in execution.

Platform Leverage

A Data Developer Platform ensures reusable metadata, standardised modelling, and governed data exposure. It stabilises the production layer. MCP ensures standardised AI execution and stabilises the interaction layer.

Final Note

MCP is the standardisation of intelligence interaction. Data Products are economic interfaces tied directly to business value. A Data Developer Platform is the industrialisation layer.

When aligned, the organisation moves from Pipelines to Products to Programmable intelligence surfaces.

Author Connect 💬

Find me on LinkedIn 🙌🏻

Find me on LinkedIn 🤝🏻

MD101 Support ☎️

If you have any queries about the piece, feel free to connect with the author(s). Or feel free to connect with the MD101 team directly at community@moderndata101.com 🧡

From MD101 team 🧡

🌎 Global Modern Data Report 2026

The Modern Data Report 2026 is a first-principles examination of why AI adoption stalls inside otherwise data-rich enterprises. Grounded in direct signals from practitioners and leaders, it exposes the structural gaps between data availability and decision activation.

With hundreds of datapoints from 500+ data leaders and experts from across 64 countries, this report reframes AI readiness away from models and tooling, and toward the conditions required and/or desired for reliable action.
